
Saturday, October 24, 2009

The other day I saw a flier for a colloquium in my department that sounded kind of interesting, but I thought, "It probably won't be worth it," and I ended up not going. After all, anyone with an internet connection can find a cyber-colloquium to participate in -- one drawn from a much wider range of topics (and so more likely to really grab your interest), whose participants come from a much wider range of people (and so where you're more likely to find experts on the topic, although also more know-nothings who follow crowds for the attention), and whose lines of thought can extend for much longer than an hour or so without fatiguing the participants.

So, this is something like the Pavarotti Effect of greater global connectedness: local opera singers go out of business because consumers would rather listen to a CD of Pavarotti. This type of "creative destruction" happens only once it becomes cheap to find the Pavarottis and distribute their work on a global scale. Similarly, if academics migrated to email discussion groups or -- god help you -- even a blog to get whatever colloquia gave them, a far smaller number of speakers would be in demand. Why spend an hour of your time reading and commenting on the ideas of someone you see as a mediocre thinker when you could read and comment on someone you see as a superstar?

Sure, perceptions differ among the audience, so you could find two sustained online discussions that stood at opposite ends of an ideological spectrum -- say, biologists who want to see much more vs. much less fancy math enter the field. That would prevent one speaker from getting all the attention. But even here, there would be a small number of superstars within each camp, and most of the little guys who could've given a talk here or there before would not get their voices heard on the global stage. Like the lousy local coffee shops that get displaced by Starbucks (unlike the good local ones, which are robust to invasion), they'd have to cater to a niche audience that preferred quirkiness over quality. So the big losers would be the producers of lower-quality ideas, and the winners would be the producers of higher-quality ideas as well as just about all consumers. Academics wear both of these hats, but many online discussion participants might only sit in and comment rather than give talks themselves.

It seems more or less like a no-brainer, but will things actually unfold as above? I still have some doubts. The main assumption behind Schumpeter's notion of creative destruction is that firms are competing and can either profit or get wiped out.
If you find some fundamentally new and better way of doing something, you'll replace the old way, just as the car replaced the horse and buggy. If academic departments faced these pressures, the ones that made better decisions about whether to host colloquia would grow, while those that made poorer decisions would go under. But in general, departments aren't going to go out of business: no matter how low they may fall in prestige or intellectual output relative to other departments, they'll still get funded by their university and by other private and public sources. They have little incentive to ask whether it's a good use of money, time, and effort to host colloquia in general, or even particular talks, and so these mostly pointless things can continue indefinitely.

Do the people involved with colloquia already realize how mostly pointless they are? I think so. If the department leaders perceived an expected net benefit, then attendance would be mandatory -- at least partial attendance, like attending a certain percentage of all the talks hosted during a semester. You'd be free to allocate your partial attendance however you wanted, just as you're free to choose your elective courses when you're getting your degrees -- but you'd still have to take something. The way things are now, it's as though the department told its students, "We have several of these things called elective classes, and you're encouraged to take as few or as many as you want, but you don't actually have to." Not exactly a ringing endorsement.

You might counter that the department heads simply value keeping these choices entirely voluntary, rather than browbeating students and professors into attending. But again, mandatory courses and course loads contradict this in the case of students, and all manner of mandatory career-enhancement activities contradict this in the case of professors (strangely, "faculty meetings" are rarely voluntary).
Since they happily issue requirements elsewhere, it's hard to avoid the conclusion that even they don't see much point in sitting in on a colloquium. As they must know from first-hand experience, it's a better use of your time to join a discussion online or through email.

The fact that colloquia are voluntary gives hope that, even though many may persist in wasting their time, others will be freed up to communicate more effectively on some topic. Think of how dismal the intellectual output was before the printing press made setting down and ingesting ideas cheaper, and before strong modern states made postal routes safer and thus made it cheaper to transmit ideas. You could only feed at the idea-trough of whoever happened to be physically near you, and you could only get feedback on your own ideas from whoever was nearby. Even if you were at a "good school" for what you did, that couldn't substitute for interacting with the cream of the crop from across the globe. Now, you can easily break free from local mediocrity -- hey, they probably see you the same way! -- and find much better relationships online.

Labels: academia, Economics, education, Technology

Saturday, September 19, 2009

I recently listened to a radio program that featured the topic of "e-memory." The guests were promoting their book, Total Recall: How the E-Memory Revolution Will Change Everything. Here's part of the description:

Total Recall provides a glimpse of the near future. Imagine heart monitors woven into your clothes and tiny wearable audio and visual recorders automatically capturing what you see and hear. Imagine being able to summon up the e-memories of your great grandfather and his avatar giving you advice about whether or not to go to college, accept that job offer, or get married. The range of potential insights is truly awesome. But Bell and Gemmell also show how you can begin to take better advantage of this new technology right now. From how to navigate the serious questions of privacy and serious problem of application compatibility to what kind of startups Bell is willing to invest in and which scanner he prefers, this is a book about a turning point in human knowledge as well as an immediate and practical guide.

First, there were callers who objected that this development was against our nature. It was frankly the rather standard Luddite position. In fact, the same arguments that seem to crop up in societies experiencing the first flushes of mass literacy are being recycled. The arrival of the printing press witnessed the decline of the ancient techniques of Ars Memorativa, so surely much will be lost. I don't dismiss all objections to the utility of technology; everything has its limits. Telecommunications has not replaced, and will not replace, face-to-face communication in many situations or contexts for most people. But the same stock objections seem rather tired after a few thousand years.

And yet I wonder if this is that big of a deal. How many people memorize their friends' phone numbers in the age of the cellphone? There are already things you don't have to memorize the way you did in the past. But facts stored outside of our brains exist a la carte, as opposed to being embedded in a network of implicit connections. To generate novel insight, these connections and networks of facts need to be present latently, as background conditions underneath reflective thought.
Of course, for most people novel abstractions, analyses, and streams of data are irrelevant. So a perfect record of one's personal life and relationships may change a great deal. I have a rather good memory and would honestly appreciate it if others had as much recall about the details of individuals whom they might have met years ago and such. Much less awkwardness.

Labels: Technology

Thursday, August 27, 2009

Web 2.0 party is over -- you're going to pay for the news again, and hopefully more

posted by agnostic @ 8/27/2009 12:52:00 AM

Recently at my personal blog I've been focusing on the idiocy of Web 2.0's central strategy for growth, namely creating online networks or communities where costly participation is given away for free. (See my posts on how the profitable online papers charge, on how YouTube and Facebook are still not profitable, and a more general round-up of the second dot-com bust.) The hope was that hosting a free party with an open bar would attract a large crowd, and that this in turn would lead to ever-increasing ad revenues. That business model was doomed to failure during the first dot-com boom, and it is just as doomed during the second one (Web 2.0). In the meantime, following this strategy leads to cultural output typical of attention whores rather than the output of inventors and creators with secure patronage.

I was delighted today to discover that all of this is about to change. It's still pretty hush-hush, with no "buzz in the blogosphere": I've read a fair number of recent articles on the topic, yet none of them has mentioned the coming change, even if earlier ones this year did. Starting sometime this fall, online newspapers will finally start to charge for access to their sites. Who they charge, how much, and in what manner (yearly, per article, etc.) is entirely up to the individual papers, and we don't know what shape that will take just yet. Journalism Online, the group that's spearheading the change, says in its business model that it aims to get revenues from the top 10% of readers by visit frequency. In any case, the point is that the era of unlimited free access to online journalism is dead.

Journalism Online seems to be a central hub that readers will go through to get to the various member organizations' publications, perhaps the way college students go through their university library's website to get access to various journals. According to co-founder Leo Hindery (as I heard on Bloomberg TV today), there are over 600 papers on board, and you can bet that includes most or all of the big ones, since they provide the best quality and yet receive no money from users (other than the FT and WSJ). All of the customer's payments will be tracked through this one site. I don't have much more detail to give, since the Journalism Online website lays it out succinctly. Go read through the business model section and the press section (the 31-page PDF listed under "Industry Reports" is the most detailed).

This is the first nail in the coffin of Web 2.0, and once the other give-it-away internet companies see how profitable it is to actually -- gasp! -- charge for your product, they will wake up from their pipe dream of growing by attracting a big crowd and pushing ads.
YouTube, Facebook, MySpace, perhaps other components of Google, Wikipedia -- they can either charge and profit or get shoved out of the market by those who are growing by charging. The winners will have more to invest in improving their products and maybe even funding their industry's equivalent of basic R&D, we'll see a cultural output that won't pander quite so much to the lowest common denominator to chase ad revenue, and best of all -- the quality newspapers, social networking sites, and so on, will continue to exist and grow rather than be claimed as further casualties of the moronic dot-com boom mentality. At last the internet is sobering up from its 15-year Bender of Free.

Labels: Economics, Media, Technology

Tuesday, August 25, 2009

At the end of an otherwise good reflection in the WSJ on where Google can go from here, we read the following:

It would be foolish to predict that Google won't have another business success, of course. Microsoft managed to leverage its strength in PC operating systems into a stranglehold over the word-processing and spreadsheet applications.

Stan Liebowitz and Stephen Margolis debunked this at least 10 years ago in their book Winners, Losers, and Microsoft, and probably earlier, though I can't recall which journal article it originally appeared in. Scroll down to Figure 8.18 at Liebowitz's website, which shows the market share of Excel and Word in the Macintosh vs. Windows markets. They conclude:

Examination of Figure 8.18 reveals that Microsoft achieved very high market shares in the Macintosh market even while it was still struggling in the PC market. On average, Microsoft's market share was about forty to sixty percentage points higher in the Macintosh market than in the PC market in the 1988-1990 period. It wasn't until 1996 that Microsoft was able to equal in the PC market its success in the Macintosh market. These facts can be used to discredit a claim sometime heard that Microsoft only achieved success in applications because it owned the operating system, since Apple, not Microsoft, owned the Macintosh operating system and Microsoft actually competed with Apple products in these markets.

Labels: Economics, Technology

Tuesday, July 21, 2009

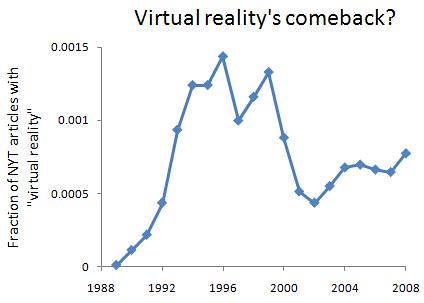

In the Angry Nintendo Nerd's video about the Virtual Boy -- a short-lived video game console that claimed to offer a "virtual reality" experience -- he says that back in the mid-1990s, it seemed like the coolest thing, but that now no one cares about virtual reality. This, he claims, is why even with better technology than before, no one is making virtual reality systems for the average consumer anymore. Certainly that seems true for pop culture: the Virtual Boy, the movies The Lawnmower Man and The Matrix, Aerosmith's video for "Amazing," and a whole bunch of video games with "virtual" in the title came out then, vs. nothing like that now.

But when I checked the NYT, I found a little surprise. Sure enough, there was a flaring up and dying down of the phrase that jibes with what we'd expect -- but there's been a modest yet steady increase in the phrase's usage since 2003. I skimmed the titles of the articles and didn't notice any clear pattern; maybe the military is simply using it more in training, and the news items are about that. Whatever it is, there's something to be explained. Not knowing anything about virtual reality, I'll leave it up to others to hazard a better guess. The graph of its appearance in the NYT is below the fold. Here's the first epidemic craze, followed by a recent increase:

[Graph: appearances of "virtual reality" in the NYT over time]

Hmm.

Labels: Technology

Wednesday, July 15, 2009

How soon businesses forget how loony the loony ideas of yesterday were

posted by agnostic @ 7/15/2009 10:37:00 PM

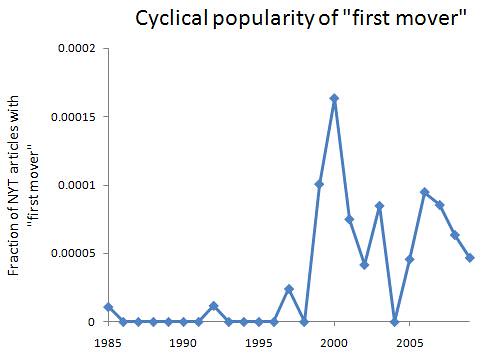

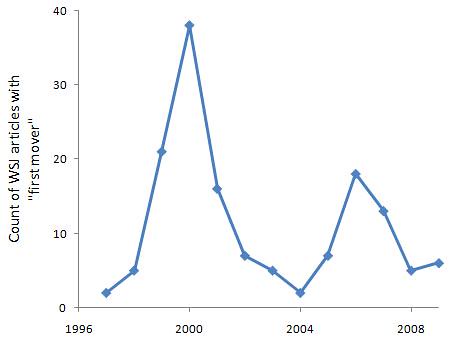

Mathematical models of contagious diseases usually look at how people flow between three categories: Susceptible, Infected, and Recovered. In some of these models, the immunity of the Recovered class may become lost over time, putting them back into the Susceptible class. This means that if an epidemic flares up and dies down, it may do so again. If we treat irrational exuberance as contagious, then we can have something like a recurring exuberant-then-gloomy cycle within people's minds. That is, people start out not having strong opinions either way, they get pumped up by hype, then they panic when they figure out that the hype had no solid basis -- but over time, they might forget that lesson and become ripe for infection once more.
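For readers who want to see the recurrence mechanically, here is a minimal sketch of the SIRS model described above, using simple Euler steps. The rate names `beta` (infection), `gamma` (recovery, i.e. the hype being exposed), and `xi` (loss of immunity, i.e. forgetting the lesson), as well as the parameter values in the example run, are illustrative assumptions, not estimates fitted to any data on exuberance.

```python
def simulate_sirs(beta, gamma, xi, s0, i0, r0, steps, dt=0.1):
    """Euler-step an SIRS model; return a list of (S, I, R) population fractions."""
    s, i, r = s0, i0, r0
    history = [(s, i, r)]
    for _ in range(steps):
        new_infections = beta * s * i * dt  # S -> I: catching the hype from others
        new_recoveries = gamma * i * dt     # I -> R: the hype is exposed as baseless
        lost_immunity = xi * r * dt         # R -> S: the lesson is slowly forgotten
        s += lost_immunity - new_infections
        i += new_infections - new_recoveries
        r += new_recoveries - lost_immunity
        history.append((s, i, r))
    return history


if __name__ == "__main__":
    # Illustrative parameters: one exuberant person infects about 3 others
    # (beta / gamma), and "immunity" lasts about 500 time units on average (1 / xi).
    runs = simulate_sirs(beta=0.3, gamma=0.1, xi=0.002,
                         s0=0.99, i0=0.01, r0=0.0, steps=10_000)
    infected = [state[1] for state in runs]
    peak = max(range(len(infected)), key=infected.__getitem__)
    # First step after the peak at which the craze has all but died out.
    lull = next(t for t in range(peak, len(infected)) if infected[t] < 1e-3)
    print(f"first wave peaks at I = {infected[peak]:.3f}")
    print(f"after the lull, a second wave reaches I = {max(infected[lull:]):.3f}")
```

With `xi = 0` the infected fraction only declines after the first peak, as in a plain SIR model; any positive `xi` lets the susceptible pool refill, which is what allows a second, usually smaller, wave.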

I'm in the middle of Stan Liebowitz's excellent post-mortem of the dot-com crash, Re-thinking the Network Economy, and in Chapter 3 he reviews the "first mover wins" craze during the tech bubble. According to this idea, largely transplanted into the business world from economists who'd already spread the myth of QWERTY, lock-in was so likely -- even if newcomers had a superior product -- that it paid to rush your product to market first in order to get the snowball inevitably rolling, no matter its quality. The idea was bogus, of course, as everyone learned afterward. (There were plenty of counterexamples available during the bubble, but the exuberance prevented people from seeing them: Betamax came before VHS, WordPerfect before Microsoft Word, Sega Genesis before Super Nintendo, etc. And there were first movers who won, if their products were highly rated. So when you enter doesn't matter, although the quality of your product does.)

But when I looked up data on how much the media bought into this idea, I was surprised (though not shocked) to see that it was resurrected during the recent housing bubble, although it has been declining since the start of the bust phase. Below the fold are graphs as well as some good representative quotes over the years.

First, here are two graphs showing the popularity of the idea in the mainstream media. The first is from the NYT and controls for the overall number of articles in a given year. (I excluded a few articles that use "first mover" in reference to the Prime Mover god concept in theology.) I don't have the total number of articles for the WSJ, so those are raw counts.
Still, the pattern is exactly the same for both, and it very suggestively reflects the two recent bubbles:

[Graphs: "first mover" articles by year in the NYT (as a share of all articles) and in the WSJ (raw counts)]

The first epidemic is easy enough to understand. After languishing in academia from the mid-1980s through the mid-1990s, the ideas of path dependence, lock-in, and first-mover advantage caught on in the business world with the surge of the tech bubble. When it became apparent that the dot-coms weren't as solid as was believed (to put it lightly), everyone realized how phony the theory supporting the bubble had been. Here's a typical remark from 2001:

WHEN they were not promoting the now-laughable myth of ''first mover advantage,'' early e-commerce proponents proffered the idea that self-service Web sites could essentially run themselves, with little or no overhead.

But clear-headedness eventually wears off, and when another bubble comes along, we can't help but feel exuberant again and take another swig of the stuff that made us feel all tingly inside before. Here's a nugget of wisdom from 2006:

Media chieftains may be kicking themselves a few years from now because they didn't step up to pay whatever it took to own the emergent first mover in online video.

And a similar non-derogatory, non-ironic use of the phrase from 2007:

For the current generation of Internet applications, sometimes referred to as "Web 2.0," the data collected from users is the true source of competitive advantage. And the first movers, the companies that understand and apply this insight, have services that get better fast enough that their competition never catches up.

Thankfully, we've been hearing less and less of this stupid idea ever since the housing bubble peaked, and at least the most recent peak was lower than the first one, but we can still expect to hear something like this during whatever the next bubble is.
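The normalization described for the NYT graph (hits divided by all articles that year, with theological uses thrown out) is simple enough to sketch. Below is a minimal version, assuming a hypothetical corpus of (year, text) records and assuming the theology exclusion can be approximated by a keyword filter; the original counts were not necessarily made this way. Setting `width=4` pools years into four-year periods, the same kind of pooling used for the JSTOR "QWERTY" counts in the QWERTY post.

```python
from collections import defaultdict


def share_by_period(articles, phrase, exclude=(), width=1, origin=0):
    """Share of articles per period that mention `phrase` but none of `exclude`.

    articles: iterable of (year, text) pairs (a hypothetical corpus format).
    width:    period length in years; 1 gives year-by-year shares.
    origin:   first year of a period, so width=4, origin=1985 gives 1985-88, 1989-92, ...
    """
    phrase = phrase.lower()
    exclude = [term.lower() for term in exclude]
    hits = defaultdict(int)
    totals = defaultdict(int)
    for year, text in articles:
        period = origin + (year - origin) // width * width
        lowered = text.lower()
        totals[period] += 1  # the denominator counts every article
        if phrase in lowered and not any(term in lowered for term in exclude):
            hits[period] += 1
    return {period: hits[period] / totals[period] for period in sorted(totals)}


# Toy records for illustration only, not real newspaper data.
corpus = [
    (1999, "Why the first mover always wins online"),
    (1999, "Quarterly earnings roundup"),
    (2001, "The now-laughable myth of first mover advantage"),
    (2001, "Aristotle and the first mover"),  # theological use, filtered out
    (2001, "Weekend weather"),
    (2001, "Box scores"),
]
print(share_by_period(corpus, "first mover", exclude=("aristotle",)))
# {1999: 0.5, 2001: 0.25}
```

Dividing by each period's total is what separates "more talk about first movers" from "more articles, period"; raw counts like the WSJ series cannot make that distinction.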
Note that the first-mover-wins idea wasn't even being applied primarily to real estate during the housing bubble: the exuberance in one domain (housing) revived the idea in a largely unrelated domain (tech), where it had flourished before. So, if you're at all involved in the tech industry, be very wary during the next bubble of claims that "first mover wins." It wasn't true then (or then, or then), and it won't be true now.

Labels: Economics, History, Technology

Monday, July 13, 2009

QWERTY-nomics debate thriving 20 years after "The Fable of the Keys"

posted by agnostic @ 7/13/2009 02:05:00 AM

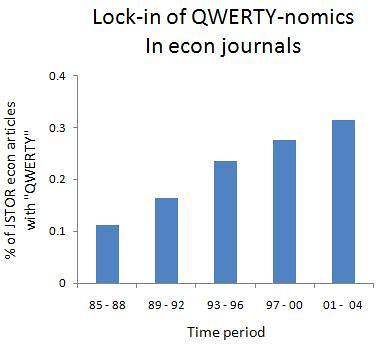

In 1990, Stan Liebowitz and Stephen Margolis wrote an article detailing the history of the now-standard QWERTY keyboard layout vs. its main competitor, the Dvorak Simplified Keyboard (DSK). (Read it here for free, and read through the rest of Liebowitz's articles at his homepage.) In brief, the strongest results in favor of the DSK came from a study that was never officially published and that was headed by none other than Dvorak himself. Later, when researchers devised more controlled experiments, the supposed superiority of the DSK mostly evaporated.

Professional typists may have enjoyed about a 5% faster rate, or maybe not. Despite the conviction of the claims you hear, this isn't a well-established finding on the order of "smarter people have faster reaction times." Moreover, most keyboard users aren't professional typists, and the vast bulk of their lost time is due to thinking about what they want to say. Therefore, the standardization of the QWERTY layout is not an example of our being locked in to an inferior technology. That isn't to say the QWERTY layout is the best imaginable, but it is certainly not clearly inferior to the DSK.

While Liebowitz and Margolis may have hoped that their examination of the evidence would throw some cold water on the "lock-in to inferior standards" craze that had gotten going in the mid-1980s, with QWERTY as the proponents' favorite example, the idea appears too appealing to academics to die. (Read this 1995 article for a similar debunking of Betamax's alleged superiority over the VHS format.) Liebowitz appeared on a podcast just this May, having to reiterate yet again that the standard story of QWERTY is bogus.

To investigate, I did an advanced search of JSTOR's economics journals for "QWERTY" and divided this count by the total number of articles. I pooled the counts into five four-year periods because the term isn't very common in any single year, which makes a year-by-year picture noisy. I excluded the post-2004 period, since there's typically a 5-year lag between publication and archiving in JSTOR. This doesn't show what each author's take is -- only how in-the-air the topic is. With the two major examples having been shown not to be examples of inferior lock-in at all, you'd think the pattern would be a flaring up and then dying down as economists were made aware of the evidence, and everyone could just leave it at that.
But nope:

[Graph: share of JSTOR economics articles mentioning "QWERTY," by four-year period]

Note that the articles here aren't the broad class discussing various types of path dependence or network effects, but specifically the kind about path dependence that leads to inferior lock-in, as signalled by the mention of QWERTY. I attribute the locking in of this inferior idea to the fact that academia is not incentivized in a way that rewards truth, at least in the social sciences. Look at how long psychoanalysis and Marxism were taken seriously before they started to die off in the 1990s. Shielded from the dynamics of survival-of-the-fittest, all manner of silly ideas can catch on and become endemic.

In this case, the enduring popularity of the idea is accounted for by the Microsoft-hating religion of most academics and of geeks outside the universities. For them, Microsoft is not a company that introduced the best word processors and spreadsheets to date, and that is largely responsible for driving down software prices, but instead a folk devil upon which the cult projects whatever evil forces it can dream up. Psychologically, though, it's pretty tough to just make shit up like that. It's easier to give it the veneer of science -- and that's just what the ideas behind the QWERTY and Betamax examples were able to give them.

Overall, Liebowitz's work seems pretty insightful. There's very little abstract theorizing, which modeling nerds like me may miss, but someone's got to take a hard-nosed look at what all the evidence says in support of one model or another. He and Margolis recognized early on how empirically unmoored the inferior lock-in literature was, and they also saw how dangerous it had become when it was used against Microsoft in the antitrust case. [1] He also foresaw how irrational the tech bubble was, losing much money by shorting tech stocks far too early in the bubble, and he co-wrote an article in the late 1990s that predicted The Homeownership Society would backfire on the poor and minorities it was supposed to help.
(Read his recent article on the mortgage meltdown, Anatomy of a Train Wreck.) Finally, one of his more recent articles looks at how file sharing has hurt CD sales. Basically, he details everything that a Linux penguin shirt-wearer doesn't want to hear.

[1] Their book Winners, Losers, and Microsoft and their collection of essays The Economics of QWERTY attack the idea from another direction, showing how the supposed conditions for lock-in or market tipping were met, and yet time and again there was turnover rather than lock-in, with each successive winner having received the highest praise.

Labels: culture, Economics, History, Technology

Thursday, July 02, 2009

In the new issue of The New Yorker, Malcolm Gladwell reviews some book about using the appeal of FREE to grow your business. This is supposed to apply most strongly to information, so the more a firm's product or service consists of information, the more it can use the appeal of FREE to earn money.

What neither Gladwell nor the reviewed book's author, Chris Anderson, seems to realize is that the appeal of FREE creates pathological behavior. Gladwell even cites a revealing behavioral economics experiment by Dan Ariely:

Ariely offered a group of subjects a choice between two kinds of chocolate -- Hershey's Kisses, for one cent, and Lindt truffles, for fifteen cents. Three-quarters of the subjects chose the truffles. Then he redid the experiment, reducing the price of both chocolates by one cent. The Kisses were now free. What happened? The order of preference was reversed. Sixty-nine per cent of the subjects chose the Kisses. The price difference between the two chocolates was exactly the same, but that magic word "free" has the power to create a consumer stampede.

In other words, FREE caused people to choose an inferior product more often than they would have if both prices were positive. Thus, in a world where there is more FREE stuff, the quality of stuff will decline. It's hard to believe that this needs to be pointed out. And again, this is not the same as prices declining because technology has become more efficient -- prices are still above 0 in that case. FREE lives in a world of its own.

If you're only trying to get people to buy your target product by packaging it with a FREE trinket, then that's fine. You're still selling something, just drawing the customer in with FREE stuff. This jibes with another behavioral economics finding: when two items A and B are similar to each other but very different from item C, all lying on the same utility curve, people ignore C because it's hard to compare it to the alternatives. They end up hyper-comparing A and B, since their features are so similar, and whichever one is marginally better wins. So if you have three more or less equally useful products, A, B, and C, where B is essentially A with something FREE thrown in, people find it a no-brainer to choose B.
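The arithmetic of Ariely's experiment can be made concrete with a toy choice model: each subject values the truffle some number of cents above the Kiss, picks whichever option has the higher value minus price, and gets a hypothetical extra "zero-price" bonus for any item that is free. The premiums and the size of the bonus below are made-up assumptions chosen to show the mechanism, not figures from the experiment, so the shares will not match Ariely's percentages.

```python
def truffle_share(premiums, price_truffle, price_kiss, zero_bonus):
    """Fraction of subjects who pick the truffle over the Kiss (prices in cents).

    premiums:   how many cents more each subject values the truffle than the Kiss.
    zero_bonus: hypothetical extra appeal of a price of exactly zero.
    """
    def utility(value, price):
        return value - price + (zero_bonus if price == 0 else 0)

    picks = sum(1 for premium in premiums
                if utility(premium, price_truffle) > utility(0, price_kiss))
    return picks / len(premiums)


premiums = list(range(40))  # toy population: premiums of 0..39 cents
# Both prices positive: only the 14-cent gap matters.
print(truffle_share(premiums, price_truffle=15, price_kiss=1, zero_bonus=10))  # 0.625
# Same 14-cent gap, but now the Kiss is free.
print(truffle_share(premiums, price_truffle=14, price_kiss=0, zero_bonus=10))  # 0.375
```

With `zero_bonus=0` the second call also returns 0.625: a plain value-minus-price model predicts no change when both prices drop by one cent, so a shift like the one Ariely found has to come from something special about a price of zero.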
An exception to the rule of "FREE leads to lower quality" might be the products that result from dick-swinging competitions, where the producer will churn out lots of FREE stuff just to show how great they are at what they do. They're concerned more with reputation than with getting by. Academic work could be an example: lots of nerds post and critique scientific work at arXiv, PLoS, and the more quantitatively oriented blogs. But in general, you can imagine the quality level you'd enjoy from a free car or an all-volunteer police force.

Even sticking with just information, per Chris Anderson, look at what movies you can download without cost on a peer-to-peer site or whatever -- they mostly all suck, being limited to the library of DVDs that geeks own. Sign up for Netflix or a similar service, and you have access to a superior library of movies, and it hardly costs you anything -- it's just not FREE. Ditto for music files you can download cost-free from a P2P site vs. iTunes, or even buying the actual CD used from Amazon or eBay. Admittedly I don't know much about computer security, but just by extending the analogy of a volunteer police force, I'd wager that security software that costs anything is better than FREE or open source security software.

To summarize, though, Gladwell's discussion of FREE misses the most important part: it tends to lower quality. I don't want to live in a world of lower quality even for items that aren't of major consequence, and (hopefully) the people in charge of high-consequence items like the police and my workplace's computer security will never be persuaded to go for FREE crap in the first place. This aspect alone answers the question he poses in the sub-headline, "Is free the future?" However, wrapping your brain around the idea that FREE tends to lower quality is discordant with a Progressive worldview, which explains why Gladwell just doesn't get it.

Labels: Behavioral Economics, Economics, Media, Technology

Thursday, June 25, 2009

Updated

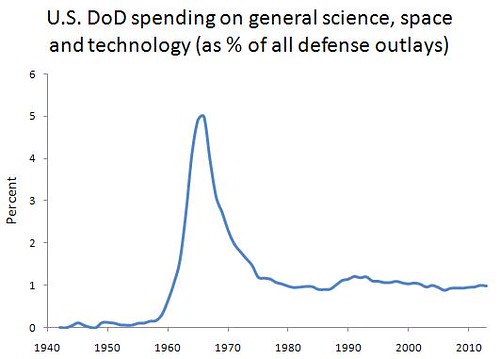

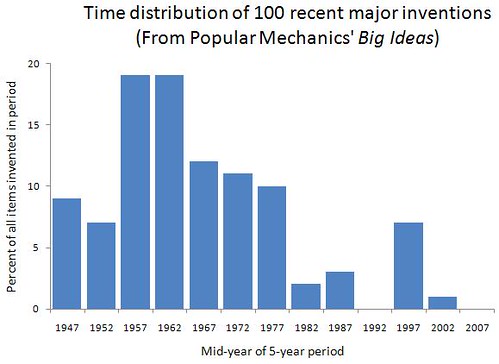

This may be old hat for some readers, but it's worth reviewing, and I have some good new data for it. The motivation is the monopoly-haters' idea that when some company comes to dominate a market, it will have no incentive to change things -- after all, it has already captured most of the audience. The response is that industries where invention is part of the companies' raison d'etre attract dynamic people, including the executives. And such people do not rest on their laurels once they're free from competition. On the contrary, they exclaim, "FINALLY, we can breathe free and get around to all those weird projects we'd thought of, and not have to pander to the lowest common denominator just to stay afloat!"

Of course, only some of those high-risk projects will become the next big thing, but a large number of trials is required to find highly improbable things. When companies are fighting each other tooth and nail, a single bad decision could sink them for good, which makes companies in highly competitive situations much more risk-averse. Conversely, when you control the market, you can make all sorts of investments that go nowhere and still survive -- and it is this large number of attempts that boosts the expected number of successes.

With that said, let's review just a little bit of history impressionistically, and then turn to a new dataset that confirms the qualitative picture. Taking only a whirlwind tour through the pre-Information Age period, we'll just note that most major inventions could not have been born if the inventor had not been protected from competitive market forces, usually by a monopolistic and rich political entity. Royal patronage is one example. And before the education bubble, there weren't very many large research universities in your country where you could carry out research -- for example, Oxford, Cambridge, and... well, that's about it, stretching back 900 years.
They don't call it "the Ivory Tower" for nothing.

Looking a bit more at recent history, which is most relevant to any present debate we may have about the pros and cons of monopolies, just check out the Wikipedia article on Bell Labs, the research giant of AT&T that many considered the true Ivory Tower during its heyday, from roughly the 1940s through the early 1980s. From theoretical milestones such as information theory and the mathematical theory of cryptography, to concrete things like transistors, lasers, and cell phones, they invented the bulk of all the really cool shit since WWII. AT&T was sued for antitrust violations in 1974, settled in 1982, and was broken up as of 1984. Notice that since then, not much has come out -- not just from Bell Labs, but at all.

The same holds true for the Department of Defense, which invented the modern airliner and the internet, although it made large theoretical contributions too. For instance, the groundwork for information criteria -- one of the biggest ideas to arise in modern statistics, which tries to measure the discrepancy between our scientific models and reality -- was laid by two mathematicians working for the National Security Agency (Kullback and Leibler). And despite all the crowing you hear about the Military-Industrial Complex, only a pathetic amount actually goes to defense (which includes R&D) -- most goes to human resources, AKA bureaucracy. Moreover, this trend goes back at least to the late 1960s. Here is a graph of how much of federal outlays go to defense vs. human resources (from here, Table 3.1; 2008 and beyond are estimates):

[Graph: share of federal outlays going to national defense vs. human resources, by year]

There are artificial peaks during WWII and the Korean War, although the share doesn't decay very much during the 1950s and '60s, the height of the Cold War and Vietnam War. Since roughly 1968, though, the chunk going to actual defense has plummeted pretty steadily.
This post-1968 downsizing of the state began long before Thatcher and Reagan were elected -- apparently, they were jumping on a bandwagon that had already gained plenty of momentum. The key point is that the state began to give up its quasi-monopolistic role in doling out R&D dollars.

Update: I forgot! There is a finer-grained category called "General science, space, and technology," which is probably the R&D that we care most about for the present purposes. Here is a graph of the percent of all federal outlays that went to this category:

This picture is even clearer than that of overall defense spending. There's a surge from the late 1950s up to 1966, a sharp drop until 1975, and a fairly steady level from then until now. This doesn't alter the picture much, but it removes some of the non-science-related noise from the signal. [End of update]

Putting together these two major sources of innovation -- Bell Labs and the U.S. Defense Department -- if our hypothesis is right, we should expect lots of major inventions during the 1950s and '60s, even a decent number during the 1940s and the 1970s, but virtually squat from the mid-1980s to the present. These are the periods when the two were more monopolistic vs. heavily downsized.

What data can we use to test this? Popular Mechanics just released a neat little book called Big Ideas: 100 Modern Inventions That Have Changed Our World. It includes roughly 10 items in each of 10 categories: computers, leisure, communication, biology, convenience, medicine, transportation, building / manufacturing, household, and scientific research. The entries were chosen by a group of around 20 people working at museums and universities. You can always quibble with these lists, but the really obvious entries are unlikely to get left out. There is no larger commentary in the book -- just a narrow description of how each invention came to be -- so it was not conceived with any particular hypothesis about invention in mind.
They begin with the transistor in 1947 and go up to the present. Pooling inventions across all categories, here is a graph of when these 100 big ideas were invented (using 5-year intervals):

What do you know? It's exactly what we'd expected. The only outliers are the late-1990s data points. But most of these seem to reflect the authors' grasping at straws to find anything in the past quarter-century worth mentioning. For example, they already included Sony's Walkman (1979), but they also included the MP3 player (late 1990s), while leaving out Sony's Discman (1984), an earlier portable player of digitally stored music. And remember, each category only gets about 10 entries to cover 60 years. Also, portable e-mail gets an entry, even though they already include "regular" e-mail. And I don't know what Prozac (1995) is doing in the list of breakthroughs in medicine. Plus they included the hybrid electric car (1997) -- it's not even fully electric! Still, some of the recent ones are deserved, such as cloning a sheep and sequencing the human genome. Overall, though, the pattern is pretty clear -- we haven't invented jackshit for the past 30 years. With the two main monopolistic Ivory Towers torn down -- one private and one public -- it's no surprise to see innovation at a historic low. Indeed, the last entries in the building / manufacturing and household categories date back to 1969 and 1974, respectively.

On the plus side, Microsoft and Google are pretty monopolistic, and they've been delivering cool new stuff at low cost (often for free -- and good free, not "home brew" free). But they're nowhere near as large as Bell Labs or the DoD were back in the good ol' days. I'm sure that once our elected leaders reflect on the reality of invention, they'll do the right thing and pump more funds into ballooning the state, as well as encouraging Microsoft, Google, and Verizon to merge into the next incarnation of monopoly-era AT&T.
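Binning invention dates into 5-year intervals, as the graph does, takes only a few lines. This Python sketch uses just the handful of years mentioned in this post (the transistor, the 1969 and 1974 entries, the Walkman, Prozac, and the hybrid car), not the book's full list of 100, so its output is illustrative only.

```python
from collections import Counter


def five_year_bin(year: int) -> int:
    """Map a year to the start of its 5-year interval, e.g. 1947 -> 1945."""
    return year - year % 5


def histogram(years):
    """Count inventions per 5-year interval, sorted by interval start."""
    counts = Counter(five_year_bin(y) for y in years)
    return sorted(counts.items())


# Only the few dates named in this post, NOT the book's complete list.
sample_years = [1947, 1969, 1974, 1979, 1995, 1997]

for start, n in histogram(sample_years):
    print(f"{start}-{start + 4}: {'#' * n}")
```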
Maybe once that merger happens, we'll get those fly-to-the-moon cars that we've been expecting for so long. I mean goddamn, it's almost 2015 and we still don't have a hoverboard. Labels: Economics, History, politics, Technology

Tuesday, June 02, 2009

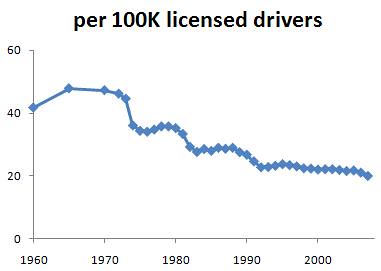

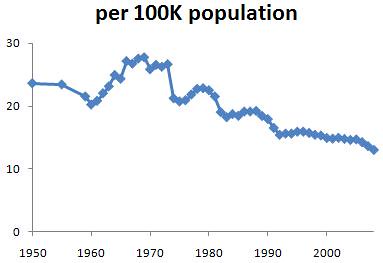

Click the tab below the body of this post to read previous entries in the series about how previous generations were more depraved. One way to look at how civilized we are is to see how we behave in situations where our conduct can mean the difference between life and death for those around us -- for example, when we drive our car. Traffic deaths, of course, reflect properties of the car as much as the people involved, but teasing the two apart turns out to be pretty simple in this case.

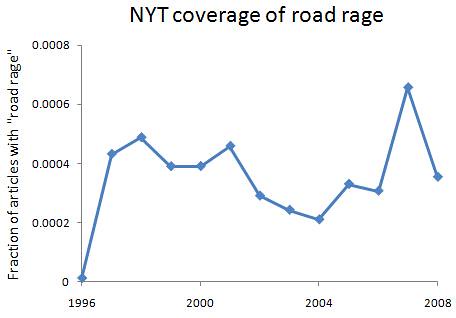

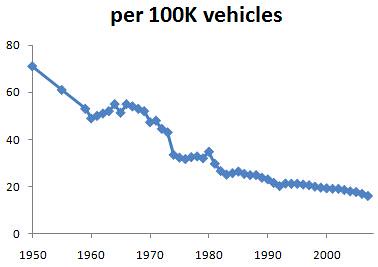

In my brief review of Daniel Gardner's book The Science of Fear, I gave a few examples, namely homicide and rape, of how media coverage of a threat can be outta-whack with the underlying risk. Gardner spends a few pages talking about the epidemic of "road rage" that was allegedly sweeping across the country not too long ago, so why don't we have a look at what the data really say about when road rage may have been greater than usual. First, here's a quick view of media coverage of "road rage," which begins in 1996:

What about actual traffic deaths, though? The data come from the National Safety Council, as recorded across several editions of the Statistical Abstract of the United States -- which, btw, is much cooler than the General Social Survey or the World Values Survey if you want to waste some time crunching numbers. I tracked the data back as far as they exist in the Stat Ab, which gives four ways of measuring traffic death rates. Here are the graphs:

The first is the most instructive -- it measures the number of deaths relative to how many vehicles are on the road and how much they're driven (i.e., vehicle-miles traveled). The other three measure deaths relative to some population size -- of vehicles, of drivers, or of the whole country -- but even if population size is constant, we expect more deaths if people drive a lot more. The distinction isn't so crucial here since the graphs look the same, but I'll refer to the first one because what it measures is more informative. From 1950 to 2008, there's a simple exponential decline in traffic deaths (r^2 = 0.97). As roads are made safer, as all sorts of car parts are made to boost safety, and as people become more familiar with traffic, we expect deaths to decline, and that's just what we see. I looked at earlier editions of the Stat Ab, and there is a similar exponential decline in railroad-related deaths from 1920 to 1959 -- again, probably due to improvements in the technology of railroads, trains, and so on.
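Here is a minimal Python sketch of that kind of trend check, assuming the fit is a least-squares line through the logarithm of the death rate (the post doesn't say exactly how its r^2 = 0.97 was computed, so that is an assumption). The residuals from the fitted line are what reveal a bulge away from the trend. The demo data are synthetic, with a made-up 30% excess in 1961-1973, not the Stat Ab numbers.

```python
import math


def fit_log_linear(years, rates):
    """Least-squares fit of log(rate) = a + b * year, i.e. rate = e^a * e^(b * year).

    Returns (a, b, r_squared), with r_squared measured on the log scale.
    """
    n = len(years)
    logs = [math.log(r) for r in rates]
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    b = sxy / sxx
    a = mean_y - b * mean_x
    ss_res = sum((y - (a + b * x)) ** 2 for x, y in zip(years, logs))
    ss_tot = sum((y - mean_y) ** 2 for y in logs)
    return a, b, 1.0 - ss_res / ss_tot


def log_residuals(years, rates, a, b):
    """Departure of each year's log rate from the trend (positive = deadlier than trend)."""
    return [math.log(r) - (a + b * x) for x, r in zip(years, rates)]


if __name__ == "__main__":
    # Synthetic data: steady exponential decline plus a made-up bulge in 1961-1973.
    years = list(range(1950, 2009))
    rates = [7.0 * math.exp(-0.04 * (y - 1950)) * (1.3 if 1961 <= y <= 1973 else 1.0)
             for y in years]
    a, b, r2 = fit_log_linear(years, rates)
    print(f"annual decline factor: {math.exp(b):.3f}, r^2 (log scale): {r2:.3f}")
    for y, res in zip(years, log_residuals(years, rates, a, b)):
        if res > 0.1:
            print(y, f"{res:+.2f}")  # years running well above the trend
```

Fitting on the log scale turns an exponential decline into a straight line, so an ordinary least-squares slope gives the yearly rate of decline directly.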
However, aside from the steady decline that we expect from safer machinery, there is a clear bulge away from the trend during the years 1961-1973. Although I haven't researched it, it seems impossible that roads went to shit during that time but not during other times, or that cars made then were less safe than the ones made before or after. The obvious answer is that people were just more reckless and/or hostile toward their fellow man in that period. There's no other huge departure from the trend, so if any time period has been characterized by "road rage," it was The Sixties (which lasted until 1973 or '74). Consider the age group whose brains are most hijacked by hormones, and maybe by drugs and alcohol too -- say, 15- to 21-year-olds. The oldest members of this group who were driving during the road rage peak were born in 1940, while the youngest ones were born in 1958. Hmmm, born from 1940 to 1958 -- Baby Boomers. (Those born after 1957-58 and before 1964 are not cultural Boomers.) And this doesn't seem to be an effect of lots more teenagers on the road than at other times -- there have been echo booms since then, and yet no big swings away from the trend. When the media and everyone hooked in to the media began talking about road rage 10 to 15 years ago, there was nothing new in the traffic death story -- indeed, the rate was continuing its decades-long decline. If you just want to know what is going on right now, the media may not be so bad at giving you that info. But this serves as yet another lesson not to believe anything they say, or imply, about trends unless there is a clear graph backing them up (or, in a pinch, a handful of data points sprinkled throughout the prose). I located, collected, analyzed, and wrote up all of the relevant data -- stretching back nearly 60 years -- in less than one day, using only the internet and Excel.
This shows us again that journalists are either too clueless, too lazy, or too stupid to figure anything out. Labels: culture, Media, previous generations were more depraved, Technology

Monday, June 01, 2009

Microsoft's new search engine, Bing, is live. And yeah, I think it's kind of a Google clone. Where's the differentiation? Then again, Microsoft does have a history of coming out with crappy clones, then slowly perfecting them and overtaking the leaders: WordPerfect, Lotus, and Netscape. But the past is not always a predictor, and Microsoft had some advantages against those companies that it lacks in the "Web 2.0" domain. Labels: Technology

Tuesday, May 19, 2009

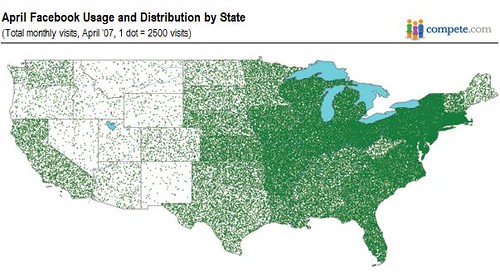

Since most people use online social networks like Facebook to keep in touch with people who they interact with in real life, it doesn't make sense to sign up for a Facebook account unless others in your area have already. This predicts that we should see a spreading out of Facebook from its founding location, just like a contagious disease rolling out from Typhoid Mary's neighborhood. Let's take a look at the data and see.

First, I found this map from Google Images of the number of Facebook visits by state:  Unfortunately, these are not per capita rates. But you can still tell that the Northeast has a whole hell of a lot of activity, while super-populated California shows little. Luckily, Facebook calculated the number of adult users in each state, and divided this by the state's entire adult population size to get the prevalence of Facebook among adults by state. The data are here, and I've made a bubble map of them here. Note that the pattern is pretty similar, even though these are now per capita rates. It looks as though Facebook is spreading from the Northeast, so one easy way to quantify the pattern is to plot the prevalence of Facebook among adults as a function of distance from the original physical site -- Harvard, in this case. (I used the zip code of a state's largest city and that of Harvard to calculate distance.) Here is the result:  Close to Harvard, prevalence is high, and it declines pretty steadily as you branch out from there. The Spearman rank correlation between Facebook prevalence and distance from Harvard is -0.58 (p less than 10^-6). If Facebook were being used to talk anonymously to a bunch of strangers, as with the early AOL chatrooms, then the adoption of this technology wouldn't show such a strong geographical pattern -- who cares if no one else in your state uses a chatroom, as long as there are enough people in total? This shows how firmly grounded in people's real lives their use of Facebook is; otherwise it would not spread in a more or less person-to-person fashion from its founding location. It's not that there aren't still chatrooms -- it's just that, to normal people, they're gay, at least compared to Facebook. Few would prefer joining a cyberworld for their social interaction -- using the internet to slightly enhance what they've already got going in real life is exciting enough. 
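The distance-and-rank-correlation calculation can be sketched in plain Python. The post used zip codes; this sketch instead assumes you already have latitude/longitude for each state's largest city and uses the haversine great-circle formula, which is an assumption about method, not necessarily what was done here. Spearman's rho is computed as the Pearson correlation of tie-averaged ranks; no p-value is calculated.

```python
import math

HARVARD = (42.37, -71.12)  # approximate lat/lon of Cambridge, MA (assumed)


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two (lat, lon) points given in degrees."""
    r = 6371.0  # mean Earth radius in km
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def average_ranks(values):
    """Rank values 1..n, giving tied values the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j share ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the tie-averaged ranks."""
    return pearson(average_ranks(x), average_ranks(y))


if __name__ == "__main__":
    # Hypothetical toy numbers (NOT the Facebook data): prevalence falls with distance.
    distances = [50, 300, 1200, 4200]
    prevalence = [0.40, 0.35, 0.20, 0.22]
    print(spearman(distances, prevalence))  # -0.8
```

For real data, compute `haversine_km(*HARVARD, lat, lon)` for each state's largest city and pass those distances to `spearman` along with the prevalence figures. Ranks make the statistic insensitive to the exact shape of the decline, so it only asks whether farther states tend to have lower prevalence.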
The only exceptions to this preference for real-life ties are cases where you have no place to congregate in real life with your partners, such as a group of young guys who want to play video games. Arcades started to vanish around 1988, so now such players must plug in to the internet and play each other online. For the most part, though, the internet isn't going to radically change how we conduct our social lives. Labels: culture, geography, Technology, web

Sunday, August 12, 2007

Facebook Grows Up:

What does Facebook get from this? If all goes well, much of what people do on the Internet will be accomplished within Facebook. Instead of eBay, you can buy in Facebook's marketplace. Instead of iTunes, there's iLike. In other words, Zuckerberg wants to keep you -- student, graduate or graybeard -- logged on to Facebook, organizing virtually everything you do via the social graph. Remember AOL, PointCast, and the portal sites? I think the past 10 years have shown that you'd better focus on what you can do well before trying to do everything. Labels: Technology

Saturday, June 09, 2007

Recently I resubscribed to the Safari online tech library (a lot of O'Reilly titles, but not exclusively) after a hiatus of a few years. Previously their system was basically like Netflix: you could put a limited number of items on your bookshelf, depending on your subscription level. Now they have a $40/month level where you have full access to their whole library! Of course, there's really nothing in there that you couldn't find through clever Google searches, but if time is money, it really does make things a lot simpler to have it all in one place.

Labels: Technology

Tuesday, April 03, 2007

Returning to a favorite theme here -- debunking the balderdash that recent human evolution is cultural rather than biological -- consider how simple technological changes can influence human biological evolution. Take musical instruments: in an environment with no musical instruments, and thus essentially no music, you'd never know who the rockstars (if male) or the dancing queens (if female) were. With no way to detect these sexy phenotypes, natural selection could not change the frequencies of alleles that contributed to them. But once the presence of musical instruments becomes a predictable feature of the environment, suddenly there's a pressure to be a good performer, and so traits both physical (dexterity, agility) and psychological (extraversion, emotional volatility) will increase in frequency, at least up to a point where any further increase would be a bad bet for newcomers as they crowd an already saturated niche. It's hard to show off when everyone else shows off in the same way.
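The "saturated niche" limit is an instance of negative frequency-dependent selection: a trait pays off while rare and stops paying once common. A toy deterministic model in Python shows the resulting equilibrium; the fitness function and the values of `s` and `k` are made-up assumptions for illustration, not estimates for any real trait.

```python
# Toy model (assumed for illustration): performers' fitness is
# 1 + s * (1 - p / k), where p is their current frequency and k is the
# niche's capacity; everyone else has fitness 1. The default s and k keep
# performer fitness positive for every p between 0 and 1.

def next_frequency(p: float, s: float, k: float) -> float:
    """Performer frequency after one generation of selection."""
    w_performer = 1.0 + s * (1.0 - p / k)  # above 1 while rare, below 1 once p > k
    mean_fitness = p * w_performer + (1.0 - p)
    return p * w_performer / mean_fitness


def simulate(p0: float, s: float = 0.1, k: float = 0.2, generations: int = 500) -> float:
    """Iterate selection from starting frequency p0 and return the final frequency."""
    p = p0
    for _ in range(generations):
        p = next_frequency(p, s, k)
    return p


if __name__ == "__main__":
    print(round(simulate(0.01), 3))  # performers start rare
    print(round(simulate(0.9), 3))   # performers start overabundant
```

Whether performers start rare or overabundant, their frequency settles at p = k, where their fitness advantage vanishes and one more show-off is no longer a good bet.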

Now, we commonly urge youngsters to "find their niche," yet many people ignore the obvious corollary of this ecological phrase, namely that whatever cultural processes spawn new niches will also result in a change in frequency of alleles implicated in the traits needed to thrive therein. Unlike Darwin's finches, humans don't need to expand into an unsettled archipelago to undergo adaptive radiation -- we can stay fixed geographically but broaden the range of niches in our "social-cultural space." At my personal blog, I sketched out a reason why technological progress tends to be more bustling than progress in more abstract disciplines like geometry, where progress appears to stagnate for quite a while until "the next big thing" comes along. Basically, the purer arts and sciences are the hobbies of weirdos, whereas technology is usually a matter of life and death: i.e., outperforming the technology of your adversaries. This literal arms race keeps the pace of technological progress much more frenzied than in other cultural areas. The key is that new shields, spears, guns, and ships don't affect the fitness of soldiers alone, because most of this new stuff will be ripped off by others to innovate civilian life. For instance, there would be no common cars if militaries had not pioneered the technology of interchangeable parts and mass assembly-line production for ships and firearms. Nor could their interiors and exteriors be held together were it not for the common use of steel, an alloy whose first modern production method -- the Bessemer process -- resulted from its inventor's efforts to make better cannon more efficiently for the Crimean War, and whose Captain of Industry (Andrew Carnegie) won lucrative contracts to supply armor plate for US Navy warships.
And since the widespread availability of the automobile, many males have carved out a niche whose appeal to females centers around owning a car when other males don't (the guy in 10th grade with his own car) or using their car to signal machismo (drag racers). So, to paraphrase a related slogan on technological changes fueling biological changes: howitzers hatched heart-throbs in hot rods. Labels: babes and hunks, maintenance of variation, Technology

Saturday, February 24, 2007

According to the editors of Nature:

[W]hat has been universally deemed as unacceptable is the pursuit of human reproductive cloning - or the production of what some have called a delayed identical twin. Here, the two issues that have dominated the discussion have been dignity and safety. There is a consensus that dignity is not undermined if a human offspring is valued in its own right and not merely as a means to an end. But there is no consensus that we will eventually know enough about cloning for the risks of creating human clones to be so small as to be ethically acceptable. Labels: Cloning, Technology |