
Tuesday, March 31, 2009

Genes may time loss of virginity:

As genetic determinism goes, the new findings are modest. Segal's team found that genes explain a third of the differences in participants' age at first intercourse - which was, on average, a little over 19 years old. By comparison, roughly 80% of variations in height across a population can be explained by genes alone. The paper is Age at first intercourse in twins reared apart: Genetic influence and life history events. FuturePundit notes: The team found a weaker effect from genes with people born before 1948. This supports an argument I've made here previously: the breakdown of old cultural constraints on behavior frees up people to follow genetically driven desires and impulses. We become more genetically driven as external constraints weaken. When you remove the strength of environmental parameters from the equation it naturally results in a greater salience of heritable ones. Ergo, the logic whereby you can make the case that in a perfect meritocracy there will be much stronger genetic sorting by class (via assortative mating, etc.). Related: DRD4 and virginity. Labels: Genetics, Personality

In A Farewell to Alms, Gregory Clark provides data on interest rates to show that Europeans gradually developed lower time preferences. In other words, they were more likely to delay gratification and plan for the future -- paying back loans, for example. He also interprets data on wills as showing that most people of English descent today are the genetic legacy of the middle class, the poor and the aristocracy mostly having failed to reproduce themselves. That leaves us with a society where the average person maximizes their long-term material welfare much better than their counterparts would have in the Middle Ages or before. There appears to be somewhat of a drawback, though: doing so makes you more miserable over the long term.

John Tierney recently reviewed a series of studies on how the intensity of guilt and regret changes over time. Read the most recent article for free here, which contains five related studies. The journal article and Tierney's write-up are brief and straightforward, so I won't belabor the details here. Basically, in the short term, indulgence-driven guilt stings more than prudence-driven regret, and this motivates us toward virtuous behavior, such as delaying material gratification. In the long term, though, guilt has faded away and regret over missing out on life's pleasures weighs more heavily on our mind. Oddly, then, maximizing long-term material well-being minimizes long-term hedonic well-being. If the big shift to low time preferences was as recent as Clark suggests -- during the Modern and especially Industrial period -- then perhaps our brain's pleasure or reward system hasn't had enough time to rewire itself to make us feel warm and fuzzy about having saved, abstained, and done the prudent thing in the past. Rather, since all other human groups before the big change, and certainly other primate groups, had very high time preferences, the reward system is probably designed to make us feel happy as we pore over a mental photo album that's stuffed with memories of irresponsible fun and indulgence. Hey, no one ever said that changing the world and getting shit done was going to be emotionally uplifting. I'd like to see follow-up studies focus on individual differences in how strongly people are motivated by guilt vs. regret. Most personality questionnaires measure something called excitement seeking or novelty seeking, as well as impulsiveness. We might predict that impulsive and excitement-seeking people are more motivated by avoiding regret than avoiding guilt, which leads them toward indulging more in the present. You could re-do all of the five studies in the article above, but using personality traits as predictor variables. 
If different parts of the brain light up when we feel guilt vs. regret, you could see if impulsive and excitement-seeking people showed greater responses to regret-based scenarios than guilt-based scenarios. (E.g., they read a story about someone else feeling these emotions, they reflect on an episode from their own lives, they see pictures of the faces of others expressing these emotions, and so on.) On an applied level, if you suffer from "hyperopia" -- planning too much for your material future -- you can push yourself to indulge merely by reflecting on how you may in 20 years regret missing out on having fun now. If you remind yourself that "You'll regret it if you don't," then you won't find yourself sighing later on about that more exciting trip you should have taken your son on, that year of working in a more fulfilling city for less pay, or that student who made a pass at you that you should have slept with. Labels: Economics, Evolutionary Psychology, Psychology

Monday, March 30, 2009

A quick link to Jerry Coyne's blog in support of his new book, Why Evolution is True. There are some nice posts--see for example this one on the evolution of pygmies.

Connections between Mendelian diseases and natural variation

posted by

p-ter @ 3/30/2009 07:49:00 PM

I've written before about a pattern emerging from genome-wide association studies--genes in which mutations cause rare extreme forms of a phenotype often harbor common variation that influences natural, non-disease variation in that same phenotype. A pair of new studies on variation in cardiac repolarization (summarized here) provide an additional example of this pattern.

It's worth noting that this was something of an obvious hypothesis--candidate gene association studies often targeted genes known to cause Mendelian disorders when mutated. In retrospect, the reason these studies were often inconclusive was a simple lack of power. Labels: Genetics

Steve brings up the fact that there is a trend in Indian culture toward memorizing stuff as a way of showing off one's intellect. This seems plausible. But I think a bigger point might be that rote learning and feats of memory have traditionally been more important in human history than they are now. Western societies in particular are on the cutting edge of placing more emphasis on creative, original thinking, which shows the ability to reconstitute concepts into a novel synthesis as opposed to regurgitating ancient forms. In fact, before the printing press made books much more common, there was a whole field termed the Art of Memory in the West.

Note: I would also add that memory has different utility in different fields. Physicists who I've known seem to be rather slack about memorization, but then their field is one where theory is robust enough to generate useful inferences. In some ways the rise of mathematical science is the story of the decline of memory. Labels: intellectual history

Friday, March 27, 2009

As a follow-up to the post below, Jake Young at Pure Pedantry has a thorough review.

Labels: IQ, Neuroscience

Thursday, March 26, 2009

Adolescent Leadership and Adulthood Fertility: Revisiting the "Central Theoretical Problem of Human Sociobiology":

Human motivation for social status may reflect an evolved psychological adaptation that increased individual reproductive success in the evolutionary past. However, the association between status striving and reproduction in contemporary humans is unclear. It may be hypothesized that personality traits related to status achievement increase fertility even if modern indicators of socioeconomic status do not. We examined whether four subcomponents of type-A personality-leadership, hard-driving, eagerness, and aggressiveness—assessed at the age of 12 to 21 years predicted the likelihood of having children by the age of 39 in a population-based sample of Finnish women and men (N=1,313). Survival analyses indicated that high adolescent leadership increased adulthood fertility in men and women, independently of education level and urbanicity of residence. The findings suggest that personality determinants of status achievement may predict increased reproductive success in contemporary humans. In Finland a "Type-A Personality" presumably refers to someone willing to make eye contact with family members. In any case I think this table is probably the most informative:  The main caveat which is stated in the paper is that we're talking about Finland today. How generalizable is this? If leadership was a primary factor behind reproductive success over long periods of time how come we're not all Type A personalities? I think it seems likely that the fitness of these individuals and their morph exhibits frequency dependence. Additionally the longer term volatility of this strategy probably differs from more retiring personal profiles. The Type A strategy seems more likely to be subject to winner-take-all dynamics; there were many prominent leaders on the Mongolian plain of 1250. Very few of them have descendants due to the fact that one Type A eliminated all the rest. 
In Farewell to Alms Greg Clark reports data which illustrate that before the 19th century the blooded military nobility might have had below average replacement because of mortality during war. In contrast, the gentry were fertile. Not to nerd out, but this shows that the Hobbit strategy can beat the Numenorean over the long term. Modern post-industrial societies have a particular social ecology, and are subject to a dynamic contingent upon that ecology. Let's not overgeneralize. Labels: Evolutionary Psychology, Finn baiting, Personality

Positive association between cognitive ability and cortical thickness in a representative US sample of healthy 6 to 18 year-olds:

Neuroimaging studies, using various modalities, have evidenced a link between the general intelligence factor (g) and regional brain function and structure in several multimodal association areas. While in the last few years, developments in computational neuroanatomy have made possible the in vivo quantification of cortical thickness, the relationship between cortical thickness and psychometric intelligence has been little studied. Recently, cortical thickness estimations have been improved by the use of an iterative hemisphere-specific template registration algorithm which provides a better between-subject alignment of brain surfaces. Using this improvement, we aimed to further characterize brain regions where cortical thickness was associated with cognitive ability differences and to test the hypothesis that these regions are mostly located in multimodal association areas. We report associations between a general cognitive ability factor (as an estimate of g) derived from the four subtests of the Wechsler Abbreviated Scale of Intelligence and cortical thickness adjusted for age, gender, and scanner in a large sample of healthy children and adolescents (ages 6–18, n = 216) representative of the US population. Significant positive associations were evidenced between the cognitive ability factor and cortical thickness in most multimodal association areas. Results are consistent with a distributed model of intelligence. See ScienceDaily. Labels: IQ

Wednesday, March 25, 2009

You probably know that lions were native to Greece 2,000 years ago (ergo, the Lion Gate). But more importantly I just realized today how important it might be that the rats we know of as rats are relative newcomers to Western Eurasia (above & beyond their specific relevance to plague). The black rat for example seems to have arrived in the Mediterranean just as lions were going extinct, during the days of the Roman Empire. But today the black rat is rare in Europe (generally found in port cities) and has been replaced by the brown rat, which only arrived in the early modern period (e.g., 18th century in Britain). So check out Rats, Communications, and Plague: Toward an Ecological History.

Labels: History

I'm going to leave this without comment, The Crash-Test Solution. Subhead: "we now know that few people saw the downturn coming. Scientists are working to make sure that never happens again."

Labels: Finance

Tuesday, March 24, 2009

Signals of recent positive selection in a worldwide sample of human populations...again, sort of

posted by

Razib @ 3/24/2009 10:46:00 AM

New paper in Genome Research, Signals of recent positive selection in a worldwide sample of human populations:

Genome-wide scans for recent positive selection in humans have yielded insight into the mechanisms underlying the extensive phenotypic diversity in our species, but have focused on a limited number of populations. Here, we present an analysis of recent selection in a global sample of 53 populations, using genotype data from the Human Genome Diversity-CEPH Panel. We refine the geographic distributions of known selective sweeps, and find extensive overlap between these distributions for populations in the same continental region but limited overlap between populations outside these groupings. We present several examples of previously unrecognized candidate targets of selection, including signals at a number of genes in the NRG-ERBB4 developmental pathway in non-African populations. Analysis of recently identified genes involved in complex diseases suggests that there has been selection on loci involved in susceptibility to type II diabetes. Finally, we search for local adaptation between geographically close populations, and highlight several examples. I've blogged it at ScienceBlogs, and so has Genetic Future, and John Hawks offers a response. Though there are so many references to the Supplements, which aren't online, I feel like there's one more course remaining.... Labels: Genetics, Population genetics

Monday, March 23, 2009

Transcriptional neoteny in the human brain:

In development, timing is of the utmost importance, and the timing of developmental processes often changes as organisms evolve. In human evolution, developmental retardation, or neoteny, has been proposed as a possible mechanism that contributed to the rise of many human-specific features, including an increase in brain size and the emergence of human-specific cognitive traits. We analyzed mRNA expression in the prefrontal cortex of humans, chimpanzees, and rhesus macaques to determine whether human-specific neotenic changes are present at the gene expression level. We show that the brain transcriptome is dramatically remodeled during postnatal development and that developmental changes in the human brain are indeed delayed relative to other primates. This delay is not uniform across the human transcriptome but affects a specific subset of genes that play a potential role in neural development. Here are the 4 classes of gene expression trajectories they're focusing on:

They found that there was a relative enrichment of genes which exhibited human neoteny, with delayed expression: Finally: We analyzed the genes affected by the neotenic shift in the human prefrontal cortex with respect to their histological location, function, regulation, and expression timing. First, with respect to their histological location, we used published gene expression data from human gray and white matter...and found that, in both brain regions, human neotenic genes are significantly overrepresented among genes expressed specifically in gray matter...but not among genes expressed in white matter.... Labels: Cognitive Science, Neuroscience

Saturday, March 21, 2009

Comments open

Note from Razib: I haven't watched BSG since the first few episodes. Please be careful about your first few words in your comments as I have to moderate and will also see them on the right side under recent comments. I plan to watch the whole series on DVD over a weekend at some point in the future when I have time. Thanks. Labels: battlestar galactica

Thursday, March 19, 2009

Tracking economists' consensus on money illusion, as a proxy for Keynesianism

posted by

agnostic @ 3/19/2009 02:07:00 PM

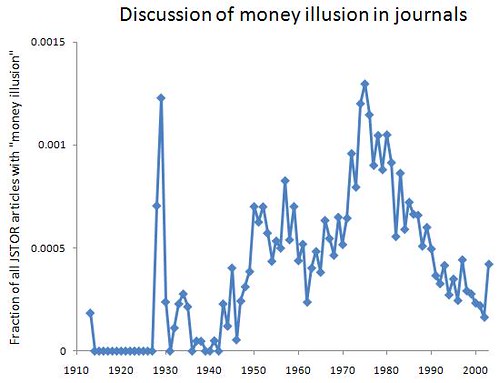

I'm probably not the only person playing catch-up on economics in order to get a better sense of what the hell is going on. Just two economists clearly called the housing bubble and predicted the financial crisis, and only one of them has several books out on the topic -- Robert Shiller, the other being Nouriel Roubini. With Nobel Prize winner George Akerlof, Shiller recently co-authored Animal Spirits, a popular audience book making the case that human psychology and behavioral biases need to be taken into account when explaining any aspect of the economy, especially when things get all fucked up. That argument would seem superfluous, but economics is the butt of "assume a can-opener" jokes for a reason.

To be fair to the field, though, they point out that before roughly the 1970s, mainstream economists all believed that the foibles of human beings, as they really exist, should be incorporated into theory, rather than dismissing them as behaviors that only an irrational dupe would show. Remember that Adam Smith was a professor of moral philosophy and wrote extensively about human psychology. From what I can tell, the recent shift does not have to do with the introduction of math nerds into the field, since the man who laid most of the formal foundation -- Paul Samuelson -- was squarely in the "psychology counts" camp. It looks more like a subset of math nerds is responsible -- call them contemptuous autists, as in "What kind of idiot would engineer the brain that way?!" (Again, these are very rough impressions, and I'm winging it in categorizing people.) Of the many ideas relevant to understanding financial crises, a key one from the old school period is money illusion, or the idea that people think in terms of nominal rather than real prices. For example, if the nominal prices of things you buy go down by 20%, you won't be any better or worse off in real terms if your nominal wages also go down by 20%. However, most people don't think this way, and would see a 20% pay-cut in this context as a slap in the face, a breach of unspoken rules of fairness. This is an illusion because a dollar (or euro, or whatever) isn't a fixed unit of stuff -- what it measures changes with inflation or deflation. It's the same reason that women's clothing designers use fuzzy units of measurement -- "sizes" -- rather than units that we agree to fix forever, such as inches or centimeters. By artificially deflating the spectrum of sizes, a woman who used to wear a size 10 now wears a size 6, and she feels much better about herself, even if she has stayed the same objective size or perhaps even gotten fatter. How could they be so stupid to fall for this, when everyone knows it's a trick? 
Who knows, but they do. Similarly, everyone knows that inflation of prices exists, and yet the average person still falls victim to money illusion, and economic theory will just have to work that in, just as evolutionary theory must work in the presence of vestigial organs, sub-optimally designed parts, and other things that make engineers' toes curl. Animal Spirits provides an overview of the empirical research on this topic, and it looks like there's convincing evidence that people really do think this way. To take just one line of evidence, wages appear to be very resistant to moving downward, even when all sorts of other prices are declining, and interviews and surveys of employers reveal that they are afraid that wage cuts will demoralize or otherwise antagonize their employees. This is obviously a huge obstacle during an economic crisis, since firms will find it tough to hemorrhage less wealth by lowering wages -- even only by lowering them enough to match the now lower cost-of-living. The way Akerlof and Shiller present the history of the idea, it was mainstream before Milton Friedman and like-minded economists tore it down starting around 1967 and culminating by the end of the 1970s, although they hint that the idea may be seeing a rebirth. As an outsider, my first question is -- "is that true?" I searched JSTOR for "money illusion" and plotted over time the fraction of all articles in JSTOR that contain this term:  Although the term was coined earlier, the first appearance in JSTOR is a 1913 article by Irving Fisher, and the surge around 1928 - 1929 is due to commentary on his book titled Money Illusion. Academics were still talking about it somewhat through 1934, probably because the worst phase of the Great Depression spurred them to try to figure out what went wrong. The idea becomes more discussed during World War II, and especially afterwards when Keynesian thought swept throughout the academic and policy worlds within the developed countries. 
In the mid-'50s, the term decelerates and then declines in usage, although the policies of its believers are still in full swing. I interpret this as showing that from the end of WWII to the mid-'50s, their ideas were debated more and more, and after this point they considered the matter settled. Starting in the mid-late-1960s, though, the term begins to surge in usage to even greater heights than before, peaking in 1975, and plummeting afterward. This of course parallels the questioning of many of the ideas taken for granted during the Golden Age of American Capitalism, and the transition to Friedman-inspired thinking in academia and Thatcher-inspired thinking in public policy. Party affiliation clearly does not matter, since the mid-'40s to mid-'60s phase showed bipartisan support for Keynesian thinking, and after the mid-'70s there was also a bipartisan consensus on theory and policy applications. I interpret this second rise and fall as a re-ignited debate that was then considered a resolved matter -- only this time with the opposite conclusion as before, i.e. that "everyone knows" now that money illusion is irrational and therefore doesn't exist. The data end in 2003, since there's typically a five-year lag between the publication date of an article and its appearance in JSTOR. So, unfortunately I can't use this method to confirm or disconfirm Akerlof and Shiller's hints that the idea might be on its way to becoming mainstream in the near future. 
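For anyone who wants to redo the plot, the calculation can be sketched roughly as follows in Python. The yearly counts are made-up placeholders, not the actual JSTOR numbers, and the function name `term_fraction_by_year` is hypothetical. The only real idea is to divide the number of articles containing the term by the total number of articles for that year, so that the growth of JSTOR itself doesn't masquerade as growing interest in the term.

```python
def term_fraction_by_year(hits, totals):
    """Return {year: fraction of that year's articles containing the term}.

    hits   -- {year: number of articles containing the search term}
    totals -- {year: total number of articles in the archive that year}
    Years with a total of zero are skipped to avoid dividing by zero.
    """
    fractions = {}
    for year in sorted(totals):
        total = totals[year]
        if total > 0:
            fractions[year] = hits.get(year, 0) / total
    return fractions


# Placeholder counts for illustration only (hypothetical, NOT real JSTOR data).
hits = {1928: 3, 1950: 12, 1975: 40, 2003: 10}
totals = {1928: 1000, 1950: 3000, 1975: 8000, 2003: 10000}

fractions = term_fraction_by_year(hits, totals)
peak_year = max(fractions, key=fractions.get)

for year, frac in fractions.items():
    print(year, f"{frac:.4f}")
print("peak year:", peak_year)
```

Note that with these toy numbers the raw hit count and the fraction both peak in the same year, but they need not: an archive that doubles in size while hits stay flat would show a falling fraction, which is the reason for normalizing.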
Whatever the empirical status of money illusion turns out to be -- and it does look like it's real -- the bigger question is whether or not economists will return to a serious, empirical consideration of psychology -- both the universal features (however seemingly irrational), as well as the individual differences that allow Milton Friedman to easily work through a 10-step-long chain of backwards induction, but not a typical working class person, who isn't smart enough to get into college (and these days, that's saying a lot). If all the positive press, not to mention book deals, that Shiller is getting are any sign, the forecast looks optimistic. Labels: Economics, intellectual history, politics, Psychology

Wednesday, March 18, 2009

It was only six short years ago that Greg Barsh wrote an "unsolved mystery" review in PLoS Biology asking, "What Controls Variation in Human Skin Color?"

A recent review provides a nice summary of the developments since then--in short, pigmentation is now probably one of the best understood (at a genetic level) phenotypes in humans. A pretty impressive story. Labels: Genetics

Tuesday, March 17, 2009

Readers of this weblog from back in 2002 know that we used to point to Paul Thompson's research. So see this, Genetics of Brain Fiber Architecture and Intellectual Performance:

The study is the first to analyze genetic and environmental factors that affect brain fiber architecture and its genetic linkage with cognitive function. We assessed white matter integrity voxelwise using diffusion tensor imaging at high magnetic field (4 Tesla), in 92 identical and fraternal twins. White matter integrity, quantified using fractional anisotropy (FA), was used to fit structural equation models (SEM) at each point in the brain, generating three-dimensional maps of heritability. We visualized the anatomical profile of correlations between white matter integrity and full-scale, verbal, and performance intelligence quotients (FIQ, VIQ, and PIQ). White matter integrity (FA) was under strong genetic control and was highly heritable in bilateral frontal....bilateral parietal...and left occipital...lobes, and was correlated with FIQ and PIQ in the cingulum, optic radiations, superior fronto-occipital fasciculus, internal capsule, callosal isthmus, and the corona radiata...for PIQ, corrected for multiple comparisons). In a cross-trait mapping approach, common genetic factors mediated the correlation between IQ and white matter integrity, suggesting a common physiological mechanism for both, and common genetic determination. These genetic brain maps reveal heritable aspects of white matter integrity and should expedite the discovery of single-nucleotide polymorphisms affecting fiber connectivity and cognition. Here's the summary at ScienceDaily. Labels: IQ, Neuroscience
A number of people have commented on a recent paper showing an increase in heterozygosity in human populations over time, presumably due to increased outbreeding (though Dienekes suggests some of this effect may be due to more homozygous individuals living longer; my feeling is that the results associating homozygosity and lifespan are more likely to be artifacts due to increased outbreeding over time, rather than vice versa). This is an interesting result, and seems plausible, but the figure in the paper is difficult to judge--I wondered why the authors chose not to show their actual data, but rather only the fitted regression line. The answer is that the data themselves look much less impressive than the pretty lines in the main text (see right). This isn't to say that the result isn't correct (I assume the authors made sure their results are robust to the few outliers in that plot), but the relationship between homozygosity and time is certainly noisier than implied by the figure. Labels: Genetics

Monday, March 16, 2009

One of the most frustrating things about modern American models of ethnicity is that they are so focused on the racial aspect, and to a lesser extent on the white ethnics who arrived after 1840. Albion's Seed is great because it elucidates in such detail the different British strains which settled the Americas, but unfortunately it doesn't push the story beyond the colonial period. Other works of history hint at the fissures in Anglo-America, but few explore the divisions explicitly. The political ramifications of race, or the arrival of the Irish, are relatively prominent in the public consciousness, but I think it is arguable that the differences between the Puritans and Scots-Irish have had a more important effect on the trajectory of the American republic and our history. From page 50 of Clash of Extremes: The Economic Origins of the Civil War:

Northern-born settlers (and more particularly New Englanders) and Southern-born migrants had distinct work habits and their own approaches to entrepreneurial activities. Michael Chevalier, a French official who came to America in the 1830s to study public works, remarked upon the differences: "In a village in Missouri, by the side of a house with broken windows, dirty in its outward appearance, around the door of which a parcel of ragged children are quarreling and fighting, you may see another, freshly painted, surrounded by a simple, but neat and nicely whitewashed fence, with a dozen of carefully trimmed trees about it, and through the windows in a small room shining with cleanliness you may espy some nicely combed little boys and some young girls dressed in almost the last Paris fashion. Both houses belong to farmers, but one of them is from North Carolina and the other from New England." This vignette is simply an illustration of scattered quantitative data you see in some of these works. In short, New Englanders were wealthier, better educated and more fertile than immigrants to the West from the South. Because of easier movement up the Mississippi-Ohio valley the original settlers in much of the Midwest were of Southern origin; but with the opening of the Erie canal and the rise of the Great Lakes economy fertile and industrious Yankees added much of the northern Midwest to Greater New England. Much of American history can easily be modeled as a clash of civilizations.

Sunday, March 15, 2009

Will the recession bring anti-globalization protests back?

posted by

agnostic @ 3/15/2009 03:05:00 AM

When I was a clueless sophomore and junior in college, 2000 - 2001, the cool thing that was sweeping through campuses was anti-globalization. It was more than just that, but this was the core. (There was also the Nader campaign, the Florida vote fiasco, Enron, and 9/11.) At the time I was incredibly far left (left anarchist) but drifted away from the movement around the spring of 2003, the last big protest being against the invasion of Iraq. I didn't have anything to do with it after that, and my views have moved to the center-right.

As this list of anti-globalization protests confirms, I wasn't unusual. The really large protests took place in 2000 and especially 2001, they were on the decline by 2003, and from 2004 through 2006, they were non-existent within the First World (aside from ritualistic May Day protests). There's a slight uptick in 2007, and now The Telegraph reports that London is preparing for the biggest protest in a decade. The umbrella group organizing the protest is G20 Meltdown. Maybe it's not surprising, but it looks like these things flare up during recessions and abate during booms. The first round took place during the dot-com crash, and by 2004, college students and 20-somethings were too busy applying their dopey open minds to the topics of metrosexual facial moisturizers, which regional real estate bubble they would exuberantly contribute to, and the crunk and post-punk revival music that was out -- way cooler than that Blink182 bullshit that was popular from about 1997 to 2002. But now that young people sense bad things ahead, we may be in for another deluge of protesting professors, fliers for International Socialist Organization meetings, and low-status young males lobbing rocks to impress the one cute anarchist chick at the protest. Labels: Economics, History, politics

Tuesday, March 10, 2009

The genetics of blue eye color in humans is almost entirely controlled by a single SNP in a conserved non-coding region in an intron of HERC2, as was strikingly demonstrated in a recent study on using genetics to predict eye pigmentation. Humans are not the only primate to have blue eyes--one notable example is the blue-eyed black lemur (pictured on the right). As it's well known that convergent evolution in pigmentation has occurred in many taxa via similar genetic mechanisms (e.g., MC1R), one obvious question is: have similar genetic changes led to blue eyes in humans and other primates? For blue-eyed lemurs, a new study demonstrates that, well, the answer is no. The authors sequence the region known to be causal for human blue eyes in both blue-eyed black lemurs and a closely related, non-blue-eyed species, and find no differences. Though this is a negative result, it's still kind of fun, and establishes a nice example of convergent evolution via separate genetic mechanisms in primates.

Genetic Gating of Human Fear Learning and Extinction: Possible Implications for Gene-Environment Interaction in Anxiety Disorder:

Pavlovian fear conditioning is a widely used model of the acquisition and extinction of fear. Neural findings suggest that the amygdala is the core structure for fear acquisition, whereas prefrontal cortical areas are given pivotal roles in fear extinction. Forty-eight volunteers participated in a fear-conditioning experiment, which used fear potentiation of the startle reflex as the primary measure to investigate the effect of two genetic polymorphisms (5-HTTLPR and COMTval158met) on conditioning and extinction of fear. The 5-HTTLPR polymorphism, located in the serotonin transporter gene, is associated with amygdala reactivity and neuroticism, whereas the COMTval158met polymorphism, which is located in the gene coding for catechol-O-methyltransferase (COMT), a dopamine-degrading enzyme, affects prefrontal executive functions. Our results show that only carriers of the 5-HTTLPR s allele exhibited conditioned startle potentiation, whereas carriers of the COMT met/met genotype failed to extinguish conditioned fear. These results may have interesting implications for understanding gene-environment interactions in the development and treatment of anxiety disorders. Also see ScienceDaily. Here's the COMT SNP in SNPedia. Also, here it is in the HGDP browser. A/A is the low-activity variant. Related: Other posts on COMT. Labels: Behavior Genetics, Genetics

Advanced Paternal Age Is Associated with Impaired Neurocognitive Outcomes during Infancy and Childhood. I blogged it at ScienceBlogs.

Labels: Genetics

Monday, March 09, 2009

It's out. Will be interesting to compare with Religious Landscape Survey. Here's a headline from a summary: More Americans say they have no religion. H/T Secular Right.

Saturday, March 07, 2009

For non-blogging reasons I have recently been exploring the web for online primary texts in the history of biology. Since I last did much searching of this kind, many new resources have become available, and existing ones have been improved. For the benefit of any interested readers, here are some recommendations:

A good website on the history of genetics, with an emphasis on late 19th and early 20th century texts, is here. It includes the original papers of Gregor Mendel and some important works by August Weismann. For individual authors, Charles Darwin is of course well served. The 'Complete Works' site maintained by John Van Wyhe here gives access to all of Darwin's published work, many of the manuscript sources, and related works by other authors, such as contemporary reviews of Darwin's works. The full published correspondence from the Darwin Correspondence Project, which currently goes up to 1868, is available online about four years after print publication. For the period after 1868, the excellent Life and Letters and More Letters collections, edited by Darwin's son Francis, are already available. People sometimes complain that Alfred Russel Wallace is neglected in favour of Darwin, but so far as online resources are concerned, Wallace has an outstanding website created by Charles H. Smith here, containing a large proportion of Wallace's scattered and diverse works. I have often mentioned Gavan Tredoux's Francis Galton website before, but here it is again. The complete works of Louis Pasteur are available here (follow the external links). I don't personally care much for Herbert Spencer, but he is historically important, and many of his major works are available here. These do not currently include his Principles of Biology, but this is available here. Added: I just found a website for T. H. Huxley, here. I don't know of any other 19th century biologists with 'complete works' websites, but many important works are now available on resources such as Google Books or OpenLibrary. For example, most of the books of the great anatomists Georges Cuvier and Richard Owen are available. Going back a bit further in time, the works of Lamarck are available here, and those of Buffon here. These are the sources I thought worth mentioning.
If readers have other recommendations, please put them in Comments.

Thursday, March 05, 2009

It has been noticed in some diseases that common variants which lead to modest increases in risk are located in or near genes that also, when mutated, cause severe monogenic forms of the same disease (e.g., obesity). This naturally leads to the hypothesis that newly identified genes containing modest risk alleles will also contain rarer alleles of strong effect.

A new study tests this hypothesis in type I diabetes: the authors take 10 genes known to be involved in diabetes etiology (note that many of these genes were discovered by genome-wide association studies of common variants) and re-sequence them in a large set of cases and controls. What do they find? As hypothesized, a number of rare protein-altering changes in one of the genes (IFIH1, a gene involved in response to viral infection) end up being strongly associated with type I diabetes. The effect sizes aren't massive (the risk alleles have odds ratios around 2), but they certainly have stronger effects than the common variants identified (though because of their low frequencies, they explain only a minimal fraction of all the variance in diabetes risk). This is only a proof of principle; expect many similar studies, including full exome re-sequencing, in the years to come. Labels: Genetics

Via Dienekes, The Earliest Horse Harnessing and Milking:

Horse domestication revolutionized transport, communications, and warfare in prehistory, yet the identification of early domestication processes has been problematic. Here, we present three independent lines of evidence demonstrating domestication in the Eneolithic Botai Culture of Kazakhstan, dating to about 3500 B.C.E. Metrical analysis of horse metacarpals shows that Botai horses resemble Bronze Age domestic horses rather than Paleolithic wild horses from the same region. Pathological characteristics indicate that some Botai horses were bridled, perhaps ridden. Organic residue analysis, using δ13C and δD values of fatty acids, reveals processing of mare's milk and carcass products in ceramics, indicating a developed domestic economy encompassing secondary products. Related: The Horse, the Wheel, and Language: How Bronze-Age Riders from the Eurasian Steppes Shaped the Modern World and lactase persistence.

If you haven't read any of Peter Turchin's work, his presentation (video) at Beyond Belief is a reasonable precis.

Will information criteria replace p-values in common use? Some trends

posted by agnostic @ 3/05/2009 12:30:00 AM

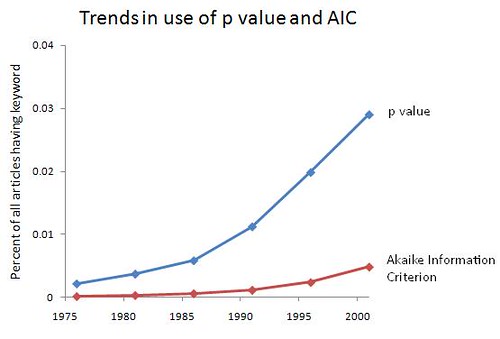

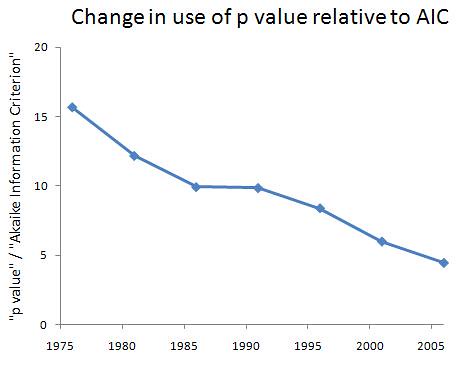

P-values come from null hypothesis testing, where you test how likely your observed data (and more extreme data) are under the assumption that the null hypothesis is true. As such, they do not allow us to decide which of a variety of hypotheses or models is true. The probability they encode refers to the observed data under an assumption -- it does not refer to the hypotheses on the table.

Using information criteria allows us to decide between a variety of hypotheses or models about how the world works. They formalize Occam's Razor by rewarding models that show a good fit to the observed data, while penalizing models that have lots of parameters to estimate (i.e., those that are more complex). Whichever one best balances this trade-off wins.

Although I'm not a stats guy -- I'm much more at home cooking up models -- I've been told that the broader academic world is becoming increasingly hip to the idea of using information criteria, rather than insisting on null hypothesis testing and the reporting of p-values. So, let's see what JSTOR has to say.

I did an advanced search of all articles for "p value" and for "Akaike Information Criterion" (the most popular one), looking at 5-year intervals just to save me some time and to smooth out the year-to-year variation. I start when the AIC is first mentioned. For the prevalence of each, I end in 2003, since there's typically a 5-year lag before articles end up in JSTOR, and estimating the prevalence requires a good guess about the population size. For the ratio of the size of one group to the other, I go up through 2008, since this ratio does not depend on an accurate estimate of the total number of articles. From 2004 to 2008, there are 4132 articles with "p value" and 927 with "Akaike Information Criterion," so the estimate of the ratio isn't going to be bad even with fewer articles available during this time. Intervals are represented by their mid-point. Someone else can do the better job of searching year by year, perhaps restricting the search to social science journals to see if real headway is being made. (It would be uninteresting to see a rise of the popularity of information criteria in statistics journals.)
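For anyone who hasn't seen the fit-versus-complexity trade-off in action, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the data points are made up, the helper names (`aic_gaussian`, `rss_constant`, `rss_line`) are hypothetical, and it uses the common least-squares form of the AIC, n*ln(RSS/n) + 2k, which drops an additive constant shared by all models fit to the same data. It has nothing to do with the JSTOR counts.

```python
import math

def aic_gaussian(rss, n, k):
    """AIC for a least-squares fit with Gaussian errors, up to an additive
    constant shared by every model fit to the same n data points."""
    return n * math.log(rss / n) + 2 * k

def rss_constant(ys):
    """Residual sum of squares for the model y = mean(y)."""
    mean = sum(ys) / len(ys)
    return sum((y - mean) ** 2 for y in ys)

def rss_line(xs, ys):
    """Residual sum of squares for the ordinary least-squares line."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = my - slope * mx
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))

# Made-up data with an obvious upward trend (illustration only).
xs = [0, 1, 2, 3, 4, 5, 6, 7]
ys = [0.1, 1.2, 1.9, 3.2, 3.8, 5.1, 6.0, 7.1]
n = len(xs)

# Parameter counts include the error variance:
# constant model = mean + variance; line = slope + intercept + variance.
aic_const = aic_gaussian(rss_constant(ys), n, k=2)
aic_line = aic_gaussian(rss_line(xs, ys), n, k=3)

print("AIC, constant model:", round(aic_const, 2))
print("AIC, linear model:  ", round(aic_line, 2))
print("Preferred:", "linear" if aic_line < aic_const else "constant")
```

The lower AIC wins. The line pays a penalty of 2 for its extra parameter, so it is only preferred when the improvement in fit more than covers that cost. Note that only differences in AIC between models fit to the same data mean anything; the absolute numbers don't.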
Here are the trends in the use of each, as well as the ratio of p-value to AIC:

It's promising that both are increasing over the past 30-odd years, since that means more people are bothering to be quantitative. Still, fewer than 5% of articles mention p-values or information criteria -- some of that is due to the presence of arts and humanities journals, but there's still a big slice of the hard and soft sciences that needs to be converted. Also encouraging is the steady decline in the dominance of p-values over the AIC: they're still about 4.5 times as commonly used in academia at large, but that's down from about 15.5 times as common in the mid-1970s, a 71% decline. Graduate students and young professors -- the writing is on the wall. Aside from being intellectually superior, information criteria will give you a competitive edge in the job market, at least in the near future. After that, they will be required. Labels: academia, Modeling, Statistics

Wednesday, March 04, 2009

Tuesday, March 03, 2009

Mean # of children for each category:

Left of Center to Far Left - 0.52
Right of Center - 0.62
Libertarian - 0.85

No Religion - 0.56
Religious - 0.99

Atheist, Agnostic & Skeptical - 0.58
Theist to aspiring Theist - 1.05

Some University Education or less - 0.67
University Degree - 0.48
Graduate Degree - 0.85

Raw data below the fold.

Monday, March 02, 2009

No surprise. But the data are rather stark. Excluding those who gave "No Answer" and "Lots," here is the mean number of children of readers by age group from the survey, with the mean number of children in the same age groups from the GSS for whites in parentheses:

18-25 = 0 (0.32)
26-35 = 0.25 (1.36)
36-45 = 0.93 (2.17)
46-65 = 1.02 (2.63)
65+ = 1.88 (2.65)

I thought I would post this since The Inductivist is giving Greg props for his large family. I did exclude the one individual who said they had "lots" of children in the 18-25 age group. I wish Bryan Caplan the best of luck in evangelizing for reproduction, but it's going to be a tough sell. The raw numbers are below in a table.

Sunday, March 01, 2009

About His Deposit...:

Caroline Hall was supposed to sign the contract a month ago guaranteeing a kindergarten spot for her son at an Upper East Side private school. He had already spent two happy years attending its early-childhood program. Related, Privileged children excel, even at low-performing comprehensives: Middle-class parents obsessed with getting their children into the best schools may be wasting their time and money, academics say today. See The Nurture Assumption: Why Children Turn Out the Way They Do. Also this Bryan Caplan post: The bottom line for parents, as usual, is: Chill out. Your kid will probably do fine whatever you do. And even if he does badly, your parenting is unlikely to help. Reminder: these are behavior genetic insights. Parents presumably have more information about their individual child; i.e., how susceptible your offspring might be to retarded peer pressure. I had many friends with whom I played b-ball as a teenager who would laugh at the fact that I read books for fun and talked shit about it, but that had pretty much zero impact on me. The esteem of my fellow man has always been rather low on the pecking order of my values (this somewhat autistic tendency explains my youthful flirtation with hardcore libertarianism). Labels: Behavior Genetics

John Hawks points to an article by James F. Crow, Mayr, mathematics and the study of evolution. As John stated this is Open Access assuming you take the time to register. Here is a taste:

In 1959 Ernst Mayr...flung down the gauntlet...at the feet of the three great population geneticists RA Fisher, Sewall Wright and JBS Haldane..."But what, precisely," he said, "has been the contribution of this mathematical school to the evolutionary theory, if I may be permitted to ask such a provocative question?" His skepticism arose in part from the fact that the mathematical theory at the time had little to say about speciation, Mayr's major interest. But his criticism was more broadly addressed to the utility of the entire approach. A particular focus was the simplification that he called "beanbag genetics", in which "Evolutionary genetics was essentially presented as an input or output of genes, as the adding of certain beans to a beanbag and the withdrawing of others." Crow referred to some of these questions 3 years ago when I interviewed him. Though much of the essay is a restatement of ideas floated elsewhere, it's still awesome that Crow is publishing at the age of 92. Judging by how quickly he replied when I sent him an email, he is also still actively corresponding. As for the general thesis outlined in the article, of course I tend to agree with Crow. From what I know, Ernst Mayr's viewpoint in Systematics was overturned by the cladist revolution, which introduced a rigorous hypothetico-deductive framework into the field. It is perhaps just part of a trend of marginalization of more philosophical biologists who rely on intuition in the realm of theory, and serves as a specific case study of Mayr's own philosophy of science and how it is ceding ground to more formal analytic techniques. Nevertheless, we can thank Mayr for his mentoring of someone like Robert Trivers. I remember talking to a friend of mine who was at OEB in the early 2000s, and she mentioned getting stuck in the elevator with Ernst Mayr, and my first reaction was, "Dude is still alive?!?!" Labels: Genetics, Philosophy of science |
