
Thursday, January 21, 2010

What era are our intuitions about elites and business adapted to?

posted by

agnostic @ 1/21/2010 01:36:00 AM

Well, just the way I asked it, our gut feelings about the economically powerful are obviously not a product of hunter-gatherer life, given that such societies have minimal hierarchy, and so minimal disparities in power, material wealth, privileges of all kinds, etc. Hunter-gatherers don't even tolerate would-be elite-strivers, so beyond a blanket condemnation of trying to be a big-shot, they don't have the subtler attitudes that agricultural and industrial people do -- these latter groups tolerate and somewhat respect elites but resent and envy them at the same time.

So that leaves two major eras -- agricultural and industrial societies. I'm going to refer to these instead by terms that North, Wallis, & Weingast use in their excellent book Violence and Social Orders. Their framework for categorizing societies is based on how violence is controlled. In the primitive social order -- hunter-gatherer life -- there are no organizations that prevent violence, so homicide rates are the highest of all societies.

At the next step up, limited-access social orders -- or "natural states" that sprang up with agriculture -- substantially reduce the level of violence by giving the violence specialists (strongmen, mafia dons, etc.) an incentive to not go to war all the time. Each strongman and his circle of cronies has a tacit agreement with the other strongmen -- who all make up a dominant coalition -- that I'll leave you to exploit the peasants living on your land if you leave me to exploit the peasants on my land. This way, the strongman doesn't have to work very much to live a comfortable life -- he just steals what he wants from the peasants on his land, and protects them should violence break out.

Why won't one strongman just raid another to get his land, peasants, food, and women? Because if this type of civil war breaks out, everyone's land gets ravaged, nobody's peasants can produce much food, and so every strongman will lose his easy source of free goodies (rents). The members of the dominant coalition also agree to limit access to their circle, to limit people's ability to form organizations, etc. If they let anybody join their group, or form a rival coalition, their slice of the pie would shrink. And this is a Malthusian economy, so the pie isn't going to get much bigger within their lifetimes. So by restricting (though not closing off) access to the dominant coalition, each member maintains a pretty enjoyable share of the rents that they extract from the peasants.
Why wouldn't those outside the dominant coalition try to form their own rival group anyway? Because the strongmen of the area are already part of the dominant coalition -- only the relative wimps could try to stage a rebellion, and the strongmen would immediately and violently crush such an uprising.

It's not that one faction of the coalition will never raid another, just that this will be rare and only when the target faction has lost some of its share in the balance of power -- maybe they had 5 strongmen but now only 1. Obviously the other factions aren't going to let that 1 strongman enjoy the rents that 5 were enjoying before, while they enjoy average rents -- they're going to raid him and take enough so that he's left with what seems his fair share. Aside from these rare instances, there will be a pretty stable peace. There may be opportunistic violence among peasants, like one drunk killing another in a tavern, but nothing like getting caught in a civil war. And they certainly won't be subject to the constant threat of being killed and having their land burned in a pre-dawn raid by the neighboring tribe, as they would be in a stateless hunter-gatherer society. As a result, homicide rates are much lower in these natural states than in stateless societies.

Above natural states are open-access orders, which characterize societies that have market economies and competitive politics. Here access to the elite is open to anyone who can prove themselves worthy -- it is not artificially restricted in order to preserve large rents for the incumbents. The pie can be made bigger with more people at the top, since you only get to the top in such societies by making and selling things that people want. Elite members compete against each other based on the quality and price of the goods and services they sell -- it's a mercantile elite -- rather than based on who is better at violence than the others.
If the elites are flabby, upstarts can readily form their own organizations -- as opposed to not having the freedom to do so -- that, if better, will dethrone the incumbents. Since violence is no longer part of elite competition, homicide rates are the lowest of all types of societies.

OK, now let's take a look at just two innate views that most people have about how the business world works or what economic elites are like, and see how these are adaptations to natural states rather than to the very new open-access orders (which have only existed in Western Europe since about 1850 or so). One is the conviction, common even among many businessmen, that market share matters more than making profits -- that being more popular trumps being more profitable. The other is most people's mistrust of companies that dominate their entire industry, like Microsoft in computers.

First, the view that capturing more of the audience -- whether measured by the portion of all sales dollars that head your way or the portion of all consumers who come to you -- matters more than increasing revenues and decreasing costs -- boosting profits -- remains incredibly common. Thus we always hear about how a start-up must offer its stuff for free or nearly free in order to attract the largest crowd, and once it has them locked in, make money off of them somehow -- by charging them later on, by selling the audience to advertisers, etc. This thinking was widespread during the dot-com bubble, and there was a neat management-oriented book written about it called The Myth of Market Share.

Of course, that hasn't gone away since then, as everyone says that "providers of online content" can never charge their consumers. The business model must be to give away something cool for free, attract a huge group of followers, and sell this audience to advertisers. (I don't think most people believe that charging a subset for "premium content" is going to make them rich.)
For example, here is Felix Salmon's reaction to the NYT's official statement that they're going to start charging for website access starting in 2011:

"Successful media companies go after audience first, and then watch revenues follow; failing ones alienate their audience in an attempt to maximize short-term revenues."

Wrong. YouTube is the most popular provider of free media, but they haven't made jackshit four years after their founding. Ditto Wikipedia. The Wall Street Journal and Financial Times websites charge, and they're incredibly profitable -- and popular too (the WSJ has the highest newspaper circulation in the US, ousting USA Today). There is no such thing as "go after audiences" -- they must do that in a way that's profitable, not just in a way that makes them popular. If you could "watch revenues follow" by merely going after an audience, everyone would be a billionaire.

"The NYT here seems to be voluntarily giving up on all its readers outside the US, who can't be reasonably expected to have the ability or inclination to pay for web access. It had the opportunity to be a global newspaper, leveraging both the NYT and the IHT brands, and has now thrown that away for the sake of short-term revenues."

This sums up the pre-industrial mindset perfectly: who cares about getting paid more and spending less, when what truly matters is owning a brand that is popular, influential, and celebrated and sucked up to? In a natural state, that is the non-violent path to success because you can only become a member of the dominant coalition by knowing the right in-members. They will require you to have a certain amount of influence, prestige, power, etc., in order to let you move up in rank.
It doesn't matter if you nearly bankrupt yourself in the process of navigating these personalized patron-client networks because once you become popular and influential enough, you stand a good chance of being allowed into the dominant coalition and then coasting on rents for the rest of your life.

Clearly that doesn't work in an open-access, competitive market economy where interactions are impersonal rather than crony-like. If you are popular and influential while paying no attention to costs and revenues, guess what -- there are more profit-focused competitors who can form rival companies and bulldoze over you right away. Again look at how spectacularly the WSJ has kicked the NYT's ass, not just in crude terms of circulation and dollars but also in terms of the quality of their website. They broadcast twice-daily video news summaries and a host of other briefer videos, offer thriving online forums, and on and on.

Again, in the open-access societies, those who achieve elite status do so by competing on the margins of quality and price of their products. You deliver high-quality stuff at a low price while keeping your costs down, and you scoop up a large share of the market and obtain prestige and influence -- not the other way around. In fairness, not many practicing businessmen fall into this pre-industrial mindset because they won't be practicing for very long, just as businessmen who cried for a complete end to free trade would go under. It's mostly cultural commentators who preach the myth of market share, going with what their natural-state-adapted brain reflexively believes.

Next, take the case of how much we fear companies that come to dominate their industry. People freak out because they think the giant, having wiped out the competitors, will enjoy carte blanche to exploit them in all sorts of ways -- higher prices, lower output, shoddier quality, etc.
We demand that the protector of the people step in and do something about it -- bust them up, tie them down, resurrect their dead competitors, just something!

That attitude is thoroughly irrational in an open-access society. Typically, the way you get that big is that you provided customers with stuff that they wanted at a low price and high quality. If you tried to sell people junk that they didn't want at a high price and terrible quality, guess how much of the market you would end up commanding? That's correct: zero. And even if such a company grew complacent and inertia set in, there's nothing to worry about in an open-access society because anyone is free to form their own rival organization to drive the sluggish incumbent out.

The video game industry provides a clear example. Atari dominated the home system market in the late '70s and early '80s but couldn't adapt to changing tastes -- and was completely destroyed by newcomer Nintendo. But even Nintendo couldn't adapt to the changing tastes of the mid-'90s and early 2000s -- and was summarily dethroned by newcomer Sony. Of course, inertia set in at Sony and they have recently been displaced by -- Nintendo! It doesn't even have to be a newcomer, just someone who knows what people want and how to get it to them at a low price. There was no government intervention necessary to bust up Atari in the mid-'80s or Nintendo in the mid-'90s or Sony in the mid-2000s. The open and competitive market process took care of everything.

But think back to life in a natural state. If one faction obtained complete control over the dominant coalition, the ever so important balance of power would be lost. You the peasant would still be just as exploited as before -- same amount of food taken -- but now you're getting nothing in return. At least before, you got protection just in case the strongmen from other factions dared to invade your own master's land. Now that master serves no protective purpose.
Before, you could construe the relationship as at least somewhat fair -- he benefited you and you benefited him. Now you're entirely his slave; or equivalently, he is no longer a partial but a 100% parasite.

You can understand why minds that are adapted to natural states would find market domination by a single firm or even a small handful of firms ominous. To such a mind, it is not possible to vote with your dollars and instantly boot out the market-dominator, so some other Really Strong Group must act on your behalf to do so. Why, the government is just such a group! Normal people will demand that vanquished competitors be restored, not out of compassion for those who they feel were unfairly driven out -- the public shed no tears for Netscape during the Microsoft antitrust trial -- but in order to restore a balance of power. That notion -- the healthy effect for us normal people of there being a balance of power -- is only appropriate to natural states, where one faction checks another, not to open-access societies where one firm can typically only drive another out of business by serving us better.

By the way, this shows that the public choice view of antitrust law is wrong. The facts are that antitrust law in practice goes after harmless and beneficial giants, hamstringing their ability to serve consumers. There is little to no evidence that such beatdowns have boosted output that had been falling, lowered prices that had been rising, or improved quality that had been eroding. Typically the lawsuits are brought by the loser businesses who lost fair and square, and obviously the antitrust bureaucrats enjoy full employment by regularly going after businesses. But we live in a society with competitive politics and free elections. If voters truly did not approve of antitrust practices that beat up on corporate giants, we wouldn't see them -- the offenders would be driven out of office.
And why is it that only one group of special interests gets the full support of bureaucrats -- that is, the loser businesses have influence with the government, while the winner business gets no respect? How can a marginal special interest group overpower an industry giant? It must be that all this is allowed to go on because voters approve of and even demand that these things happen -- we don't want Microsoft to grow too big or they will enslave us!

This is a special case of what Bryan Caplan writes about in The Myth of the Rational Voter: where special interests succeed in buying off the government, it is only in areas where the public truly supports the special interests. For example, the public is largely in favor of steel tariffs if the American steel industry is suffering -- hey, we gotta help our brothers out! They are also in favor of subsidies to agribusiness -- if we didn't subsidize them, they couldn't provide us with any food! And those subsidies are popular even in states where farming is minimal. So, such policies are not the result of special interests hijacking the government and ramrodding through policies that citizens don't really want. In reality, it is just the ignorant public getting what it asked for.

It seems useful, when we look at the systematic biases that people have regarding economics and politics, to bear in mind what political and economic life was like in the natural state stage of our history. Modern economics does not tell us about that environment but instead about the open-access environment. (Actually, there's a decent trace of it in Adam Smith's Theory of Moral Sentiments, which mentions cabals and factions almost as much as Machiavelli -- and he meant real factions, ones that would war against each other, not the domesticated parties we have today.)
We obviously are not adapted to hunter-gatherer existence in these domains -- we would cut down the status-seekers or cast them out right away, rather than tolerate them and even work for them. At the same time, we evidently haven't had enough generations to adapt to markets and governments that are both open and competitive. That is certain to pull our intuitions in certain directions, particularly toward a distrust of market-dominating firms and toward advising businesses to pursue popularity and influence more than profitability, although I'm sure I could list others if I thought about it longer.

Labels: Economic History, Economics, Evolutionary Psychology, History, politics, Psychology

Tuesday, January 12, 2010

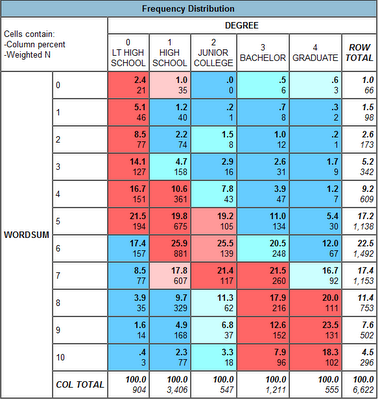

Frequent Cognitive Activity Compensates for Education Differences in Episodic Memory:

"Results: The two cognitive measures were regressed on education, cognitive activity frequency, and their interaction, while controlling for the covariates. Education and cognitive activity were significantly correlated with both cognitive abilities. The interaction of education and cognitive activity was significant for episodic memory but not for executive functioning."

Here's some survey data on books from a few years ago, One in Four Read No Books Last Year:

"One in four adults read no books at all in the past year, according to an Associated Press-Ipsos poll released Tuesday. Of those who did read, women and older people were most avid, and religious works and popular fiction were the top choices."

Here's the GSS when it comes to comparing educational attainment & WORDSUM, limiting the sample to 1998 and after:

I assume readers of this weblog are more familiar with the dull who have educational qualifications, but it is important to note that there is a non-trivial number of brights who for whatever reason didn't go on to obtain higher education (though this is more true of older age cohorts in the United States).

Labels: Psychology

Tuesday, December 29, 2009

Why do we delay gratification even when there is no downside?

posted by

agnostic @ 12/29/2009 09:10:00 PM

Earlier this year, John Tierney reviewed several studies on how delaying gratification makes us feel better in the short term by preventing guilt but makes us feel more miserable in the long term by causing regret over missed opportunities. I added my two cents here, just to note that this sounds like part of the Greg Clark story about recent genetic change in the commercial races that adapted them to the emerging mercantile societies they found themselves in. What I had in mind was the delaying of vice -- investing a dollar today rather than splurging, moderating the amount of drink or sweets you enjoy, and so on.

But now Tierney has another review of related studies which show that we delay gratification even for what should be guilt-free pleasures like redeeming a gift card, using frequent flier miles, and visiting the landmarks in your local area. And don't we all have enjoyable books and DVDs we've been putting off? After indulging in these cases, there is no potential bankruptcy, no hangover, and no tooth decay -- so why do we indiscriminately lump them in with genuine vices and put off indulging in them?

Obviously this tendency too is a feature of agrarian or industrial groups -- hunter-gatherers would never leave gift cards lying around in their drawers. It must be because of how recent the change toward delaying gratification has been. Given enough time, we might evolve a specialized module for delaying gratification in vices and another module for doing so in guilt-free pleasures, which would be better than where we are now. But when our genetic response to a change is abrupt, typically we have broad-brush solutions that take care of the intended target but also leave plenty of collateral damage. Over time our solutions get smarter, but it takes a while. Just look at how crude the responses to malaria are.

We see this domain-general taste for (or aversion to) risk in other areas. People who lead more risky lifestyles buy much less insurance than people who lead cautious lifestyles. Those who ride motorcycles without helmets would be richer and more likely to pass on their genes if they bought a lot of insurance, while those who play it safe would be richer by not buying all that superfluous insurance. Instead, daredevils are daredevils all the way -- including a contempt for insurance.

This casts doubt on how easy it is to change our behavior so that we no longer postpone our indulgence in guilt-free pleasures. Because we have a domain-general delay of gratification, it will still just feel wrong.
You can also argue the logic of buying lots of insurance to the motorcyclist who rides without a helmet, but that won't change his mind because his taste for risk is across-the-board.

Labels: Behavioral Economics, Psychology

Thursday, December 03, 2009

David Killoren points me to this Ed Yong post, Creating God in one's own image. It is based on the paper Believers' estimates of God's beliefs are more egocentric than estimates of other people's beliefs:

"People often reason egocentrically about others' beliefs, using their own beliefs as an inductive guide. Correlational, experimental, and neuroimaging evidence suggests that people may be even more egocentric when reasoning about a religious agent's beliefs (e.g., God). In both nationally representative and more local samples, people's own beliefs on important social and ethical issues were consistently correlated more strongly with estimates of God's beliefs than with estimates of other people's beliefs (Studies 1–4). Manipulating people's beliefs similarly influenced estimates of God's beliefs but did not as consistently influence estimates of other people's beliefs (Studies 5 and 6). A final neuroimaging study demonstrated a clear convergence in neural activity when reasoning about one's own beliefs and God's beliefs, but clear divergences when reasoning about another person's beliefs (Study 7). In particular, reasoning about God's beliefs activated areas associated with self-referential thinking more so than did reasoning about another person's beliefs. Believers commonly use inferences about God's beliefs as a moral compass, but that compass appears especially dependent on one's own existing beliefs."

Ed hits the main points well as usual, so let me jump to the discussion:

"these data provide insight into the sources of people's own religious beliefs. Although people obviously acquire religious beliefs from a variety of external sources, from parents to broader cultural influences, these data suggest that the self may serve as an important source of religious beliefs as well. Not only are believers likely to acquire the beliefs and theology of others around them, but may also seek out believers and theologies that share their own personal beliefs. If people seek out religious communities that match their own personal views on major social, moral, or political issues, then the information coming from religious sources is likely to further validate and strengthen their own personal convictions and values. Religious belief has generally been treated as a process of socialization whereby people's personal beliefs about God come to reflect what they learn from those around them, but these data suggest that the inverse causal process may be important as well: people's personal beliefs may guide their own religious beliefs and the religious communities they seek to be part of."

There is always debate about how religion affects cognition and culture, and how cognition and culture affect religion. I suspect that in religious environments the default stance is that religion affects cognition and culture. Religion is after all assumed to be true, a reflection of some transcendent reality. It stands to reason that its impact upon humans would be significant if you believe that it is an expression of the ultimate reality (if you are a person to whom "ultimate reality" means something, you know what I mean, though I don't really myself). But many atheists hold to the same view. The New Atheists often lay at religion's feet all the evil done in its name (though generally minimizing the power of religion as a force for altruistic action or social cohesion). This view seems to hold that religion is something clear and distinct. More generally, in civilized societies religion is a matter of rational and systematic reflection, detailed practice, and mindful contemplation.

On the other hand, there are those who emphasize how religion reflects social and cognitive presuppositions. For example, most American Christians would assert that their religion naturally leans toward an anti-racist perspective. This would not be something recognizable to R. L. Dabney.
Consider the arguments of Susan Wise Bauer, a Reformed Christian historian, on the stance of many Christian Southerners toward slavery. But in other writings she cites Dabney, who is still apparently influential among conservative Presbyterians (some have even attempted to defend slavery because it is Biblical, but to my knowledge very few conservative Christians will follow along here, instead relying on interpretations such as Bauer's). Even "conservative" and "orthodox" and "traditional" Christians seem quite clearly influenced by the distribution of norms around them. Similarly, I recall several years ago finding rather interesting the arguments of Indian Christians on why arranged marriage is Biblically preferred to love matches, with citations of specific instances in the Bible (consider the marriage of Isaac and Rebecca).

Over the last few decades cognitive anthropologists who study religion have described models and reported results which show how religious phenomena, the bundle of traits which we bracket into religion, emerge from normal human psychological and social dynamics. Some scholars have even shown that the mental model of gods across cultures is actually invariant, though the verbal descriptions are very distinct. If you consider the power of culture to change religion, the shift from pacifist to non-pacifist stance among early Christians, or pro-racist to anti-racist stance among 20th century Christians, becomes more intelligible.

I tend toward the second model in terms of its utility in what can be gleaned about human social processes. If, for example, I read the Hebrew Bible and the New Testament, would I be able to predict which group was the more pacific, American Jews or white Evangelicals? I don't think so. Scott Atran reported in In Gods We Trust that religious believers showed little correlation above expectation in the inferences they made about correct behavior in specific situations in relation to their avowed religious beliefs.
In other words, when people couldn't talk to each other and reach a religiously correct consensus, they simply gave a random range of answers. Both the Chinese Muslims and "Hidden Christians" of Japan moved in strange directions in relation to their co-religionists due to isolation. It seems plausible that to a great extent Emile Durkheim was right: religion is a reflection of society. But it is also strongly constrained by human cognitive biases.

The connection between some of these ideas and what Ed Yong asserts is obvious:

"Epley's results are sure to spark controversy, but their most important lesson is that relying on a deity to guide one's decisions and judgments is little more than spiritual sockpuppetry."

David Killoren has an alternative model:

"I can think of at least one other plausible interpretation of this study."

Killoren has an analogy clarifying what he's trying to get at:

"An analogy can help here. Suppose I think that Dr. Smith, a famous scientist, knows everything there is to know about biology. Then, if I believe that platypuses are not mammals, I should believe that Dr. Smith believes that platypuses are not mammals. (After all, if I believed that Dr. Smith considers platypuses to be mammals, and believed that Dr. Smith knows everything about biology, then I would be crazy to think platypuses are not mammals.) But this doesn't mean Dr. Smith is my sockpuppet. If Dr. Smith were to tell me that platypuses are mammals, I'd believe him, even if I previously thought otherwise."

I can see where Killoren is coming from. When I was more deeply interested in philosophy of religion, and to a great extent thought religion was mostly about belief systems, I would probably have been willing to go along with it. But at this point I think Ed Yong's thesis is more plausible because it is simple and dumb, and most people are simple and dumb.
If you need to use an analogy, that suggests that some cognitive cycles are being eaten up here, and I believe most moral cognition which has a religious tinge is actually "hard and fast" and more reflexive than this. Of course, in The Logic of Life Tim Harford shows that in the aggregate human behavior can quite often operate in a logical fashion, as if it is undergirded by a chain of clean propositions derived from axioms. But I wasn't quite convinced by Harford's apologia; I'm still with Dan Ariely.

Labels: Psychology, Religion

Wednesday, November 25, 2009

No support for birth order effects on personality from the GSS

posted by

ben g @ 11/25/2009 08:47:00 PM

In researching for a review of The Nurture Assumption, I read over the debate between Harris and Sulloway over birth order effects on personality. Sulloway's thesis, explained in Born to Rebel, is that last-born children have more rebellious, agreeable, and open-minded/liberal personalities, and that this manifests itself in history with revolutions spearheaded by last-borns. This runs in contrast to Harris's theory that the family environment has no lasting impact on personality, so she spends a good deal of time in her books and articles critiquing it.

The whole debate makes my head spin. A seemingly simple empirical question has produced years of arguing over methodology. I'm not going to go over the tedious back and forth here, except to say that you can see what both sides have to say with a Google search.

Large, controlled studies have not been kind to Sulloway's thesis. Freese, Powell, and Steelman (1999) looked for a relationship between birth order (controlled for family size) and a variety of political measures on the nationally representative General Social Survey (GSS). They found no significant associations, contrary to Sulloway's predictions.

I decided to look at the GSS myself, this time to see whether questions that tapped into personality characteristics outside of politics showed any relationship with birth order (SIBORDER) when sibship size (SIBS) was controlled for. I excluded only children. I used the Multiple Regressions feature on the Berkeley SDA tool. I found no significant associations between birth order and any of the four variables I looked at:

Labels: Psychology
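For readers who want to try the kind of analysis described in the birth-order post above, here is a minimal sketch of regressing an outcome on birth order while controlling for sibship size. It runs on synthetic data, not the GSS, and it is not the SDA tool's procedure. The function names (`ols`, `simulate`), the sample size, and the 0.3 effect of sibship size are all assumptions made up for illustration. The simulated outcome has no birth-order effect built in, so the birth-order coefficient should come out near zero.

```python
import numpy as np

def ols(y, X):
    """Ordinary least squares with an intercept.

    Returns (coefficients, standard errors); index 0 is the intercept.
    """
    X1 = np.column_stack([np.ones(len(y)), X])
    beta, *_ = np.linalg.lstsq(X1, y, rcond=None)
    resid = y - X1 @ beta
    dof = len(y) - X1.shape[1]
    sigma2 = resid @ resid / dof
    cov = sigma2 * np.linalg.inv(X1.T @ X1)
    return beta, np.sqrt(np.diag(cov))

def simulate(n=5000, seed=0):
    """Synthetic respondents (hypothetical data, not GSS records)."""
    rng = np.random.default_rng(seed)
    # Number of siblings, 1..6: only children are excluded, as in the post.
    sibs = rng.integers(1, 7, size=n)
    # Birth position, uniform over 1..sibs+1 within each family size.
    order = rng.integers(1, sibs + 2)
    # Outcome depends on sibship size only; birth order has no true effect.
    outcome = 0.3 * sibs + rng.normal(size=n)
    return outcome, order, sibs

outcome, order, sibs = simulate()
beta, se = ols(outcome, np.column_stack([order, sibs]))
t_stats = beta / se

for name, b, t in zip(["intercept", "birth order", "sibship size"], beta, t_stats):
    print(f"{name:>12}: coef={b: .3f}  t={t: .2f}")
```

Including sibship size matters because later-born positions exist only in larger families: without the control, any effect of family size would show up as an apparent birth-order effect.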

Tuesday, November 17, 2009

John Derbyshire has a review of The Faith Instinct up. He hits the major points well. I should elaborate on something. In Darwin's Cathedral David Sloan Wilson outlines two dimensions of religion, the horizontal and the vertical. The vertical is pretty straightforward: supernatural agents and forces, the cognition of religious ideas. The horizontal is the communitarian aspect of religion which sociologists such as Emile Durkheim focused on, that is, religion's functional role in society. The two are somewhat related of course, but I think it's a neat division which is useful.

I think the vertical aspect probably is a byproduct of cognitive biases we have. In other words, pleiotropy, whereby selection for agency detection, social intelligence, and innate theories of how the world works (folk biology and physics) generates intuitions which we bracket in the category "supernatural" as a response (this ranges from animism to astrology to theism). In contrast, I can see quite clearly how the horizontal aspect can foster group-level success, and so might be a target of selection. But I don't necessarily think that it is really religion as such which is the target of selection; instead, the targets are collective and communal impulses. They may be channeled in a religious manner, but clearly can manifest in other ways.

This is why I think organized religion, which is hooked into the horizontal dimension, seems to be collapsing more than "spirituality" in many nations. Many of the intuitions which generate religious impulse are strongly biologically specified, so will persist even after indoctrination ceases. By contrast I suspect that the collective and ritualistic impulses can manifest in ways we perceive as secular. Of course, this last point might be a matter of semantics, as evidenced by the term "political religion".

Labels: Psychology, Religion

Monday, November 16, 2009

A simple framework for thinking about cultural generations

posted by

agnostic @ 11/16/2009 12:44:00 AM

In this discussion about pop music at Steve Sailer's, the topic of generations came up, and it's one where few of the people who talk about it have a good grasp of how things work. For example, the Wikipedia entry on generation notes that cultural generations only showed up with industrialization and modernization -- true -- but doesn't offer a good explanation for why. Also, they don't distinguish between loudmouth generations and silent generations, which alternate over time. As long as a cohort "shares a culture," they're considered a generation, but that misses most of the dynamics of generation-generation. My view of it is pretty straightforward.

First, we have to notice that some cohorts are full-fledged Generations with ID badges like Baby Boomer or Gen X, and some cohorts are not as cohesive and stay more out of the spotlight. Actually, one of these invisible cohorts did get an ID badge -- the Silent Generation -- so I'll refer to them as loudmouth generations (e.g., Baby Boomers, Gen X, and before long the Millennials) and silent generations (e.g., the small cohort cramped between Boomers and X-ers). Then we ask why do the loudmouth generations band together so tightly, and why do they show such strong affiliation with the generation that they continue to talk and dress the way they did as teenagers or college students even after they've hit 40 years old? Well, why does any group of young people band together? -- because social circumstances look dire enough that the world seems to be going to hell, so you have to stick together to help each other out. It's as if an enemy army invaded and you had to form a makeshift army of your own. That is the point of ethnic membership badges like hairstyle, slang, clothing, musical preferences, etc. -- to show that you're sticking with the tribe in desperate times. That's why teenagers' clothing has logos visible from down the hall, why they spend half their free time digging into a certain music niche, and why they're hyper-sensitive about what hairstyle they have. Adolescence is a socially desperate time, not unlike a jungle, in contrast to the more independent situation you enjoy during full adulthood. Being caught in more desperate circumstances, teenagers freak out about being part of -- fitting in with -- a group that can protect them; they spend the other half of their free time communicating with their friends. Independent adults have fewer friends, keep in contact with them much less frequently, and don't wear clothes with logos or the cover art from their favorite new album. 
OK, so that happens with every cohort -- why does this process leave a longer-lasting impact on the loudmouth cohorts? It is the same cause, only writ large: there's some kind of social panic, or over-turning of the status quo, that's spreading throughout the entire culture. So they not only face the trials that every teenager does, but they've also got to protect themselves against this much greater source of disorder. They have to form even stronger bonds, and display their respect for their generation much longer, than cohorts who don't face a larger breakdown of security. Now, where this larger chaos comes from, I'm not saying. I'm just treating it as exogenous for now, as though people who lived along the waterfront would go through periods of low need for banding together (when the ocean behaved itself) and high need to band together (when a flood regularly swept over them). The generation forged in this chaos participates in it, but it got started somewhere else. The key is that this sudden disorder forces them to answer "which side are you on?" During social-cultural peacetime, there is no Us vs. Them, so cohorts who came of age in such a period won't see generations in black-and-white, do-or-die terms. Cohorts who come of age during disorder must make a bold and public commitment to one side or the other. You can tell when such a large-scale chaos breaks out because there is always a push to reverse "stereotypical gender roles," as well as a surge of identity politics. The intensity with which they display their group membership badges and groupthink is perfectly rational -- when there's a great disorder and you have to stick together, the slightest falter in signaling your membership could make them think that you're a traitor. 
Indeed, notice how the loudmouth generations can meaningfully use the phrase "traitor to my generation," while silent generations wouldn't know what you were talking about -- you mean you don't still think The Ramones is the best band ever? Well, OK, maybe you're right. But substitute with "I've always thought The Beatles were over-rated," and watch your peers with torches and pitchforks crowd around you. By the way, why did cultural generations only show up in the mid-to-late 19th C. after industrialization? Quite simply, the ability to form organizations of all kinds was restricted before then. Only after transitioning from what North, Wallis, and Weingast (in Violence and Social Orders -- working paper here) call a limited access order -- or a "natural state" -- to an open access order, do we see people free to form whatever political, economic, religious, and cultural organizations they want. In a natural state, forming organizations at will threatens the stability of the dominant coalition -- how do they know that your bowling league isn't simply a way for an opposition party to meet and plan? Or even if it didn't start out that way, you could well get to talking about your interests after a while. Clearly young people need open access to all sorts of organizations in order to cohere into a loudmouth generation. They need regular hang-outs. Such places couldn't be formed at will within a natural state. Moreover, a large cohort of young people banding together and demanding that society "hear the voice of a new generation" would have been summarily squashed by the dominant coalition of a natural state. It would have been seen as just another "faction" that threatened the delicate balance of power that held among the various groups within the elite. Once businessmen are free to operate places that cater to young people as hang-outs, and once people are free to form any interest group they want, then you get generations. 
Finally, on a practical level, how do you lump people into the proper generational boxes? This is the good thing about theory -- it guides you in practice. All we have to do is get the loudmouth generations' borders right; in between them go the various silent or invisible generations. The catalyzing event is a generalized social disorder, so we just look at the big picture and pick a peak year plus maybe 2 years on either side. You can adjust the length of the panic, but there seems to be a 2-year lead-up stage, a peak year, and then a 2-year winding-down stage. Then ask, whose minds would have been struck by this disorder? Well, "young people," and I go with 15 to 24, although again this isn't precise. Before 15, you're still getting used to social life, so you may feel the impact a little, but it's not intense. And after 24, you're on the path to independence, you're not texting your friends all day long, and you've stopped wearing logo clothing. The personality trait Openness to Experience rises during the teenage years, peaks in the early 20s, and declines after; so there's that basis. Plus the likelihood to commit crime -- another measure of reacting to social desperation -- is highest between 15 and 24. So, just work your way backwards by taking the oldest age (24) and subtracting it from the first year of the chaos, and then taking the youngest age (15) and subtracting it from the last year of the chaos. "Ground zero" for that generation is the chaos' peak year minus 20 years. As an example, the disorder of the Sixties lasted from roughly 1967 to 1972. Applying the above algorithm, we predict a loudmouth generation born between 1943 and 1957: Baby Boomers. Then there was the early '90s panic that began in 1989 and lasted through 1993 -- L.A. riots, third wave feminism, etc. We predict a loudmouth generation born between 1965 and 1978: Generation X. 
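The arithmetic above is simple enough to write down as a short Python sketch. The age window (15 to 24) and the two example periods come straight from the text; the names `Disorder`, `loudmouth_birth_years`, and `silent_birth_years` are made up here for illustration, and the whole thing is only as precise as the rough dates fed into it.

```python
from dataclasses import dataclass

# Age window for "young people" as given in the post; the author notes it isn't precise.
OLDEST_AGE = 24
YOUNGEST_AGE = 15


@dataclass(frozen=True)
class Disorder:
    """A period of generalized social disorder (hypothetical helper type)."""
    label: str
    first_year: int
    last_year: int


def loudmouth_birth_years(disorder):
    """Birth-year range of the cohort aged 15-24 at some point during the disorder.

    Oldest member: 24 in the first year. Youngest member: 15 in the last year.
    """
    return (disorder.first_year - OLDEST_AGE, disorder.last_year - YOUNGEST_AGE)


def silent_birth_years(earlier, later):
    """Birth years left over between two consecutive loudmouth generations."""
    _, earlier_end = loudmouth_birth_years(earlier)
    later_start, _ = loudmouth_birth_years(later)
    return (earlier_end + 1, later_start - 1)


sixties = Disorder("the Sixties", 1967, 1972)
early_nineties = Disorder("early '90s panic", 1989, 1993)

print(loudmouth_birth_years(sixties))                # (1943, 1957)
print(loudmouth_birth_years(early_nineties))         # (1965, 1978)
print(silent_birth_years(sixties, early_nineties))   # (1958, 1964)
```

Shifting a period's start or end by a year shifts the matching birth-year border by the same amount, so the borders should be read as fuzzy, not exact.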
There was no large-scale social chaos between those two, so that leaves a silent generation born between 1958 and 1964. Again, they don't wear name-tags, but I call them the disco-punk generation based on what they were listening to when they were coming of age. Going farther back, what about those who came of age during the topsy-turvy times of the Roaring Twenties? The mania lasted from roughly 1923 to 1927, forming a loudmouth generation born between 1899 and 1912. This closely corresponds to what academics call the Interbellum Generation. The next big disruption was of course WWII, which in America really struck between 1941 and 1945, creating a loudmouth generation born between 1917 and 1930. This would be the young people who were part of The Greatest Generation. That leaves a silent generation born between 1913 and 1916 -- don't know if anyone can corroborate their existence or not. That also leaves The Silent Generation proper, born between 1931 and 1942. Looking forward, it appears that these large social disruptions recur with a period of about 25 years on average. The last peak was 1991, so I predict another one will strike in 2016, although with 5 years' error on both sides. Let's say it arrives on schedule and has a typical 2-year build-up and 2-year winding-down. That would create a loudmouth generation born between 1990 and 2003 -- that is, the Millennials. They're already out there; they just haven't hatched yet. And that would also leave a silent generation born between 1979 and 1989. My sense is that Millennials are already starting to cohere, and that 1987 is more like their first year, making the silent generation born between 1979 and 1986 (full disclosure: I belong to it). So this method surely isn't perfect, but it's pretty useful. It highlights the importance of looking at the world with some kind of framework -- otherwise we'd simply be cataloguing one damn generation after another. Labels: culture, History, Psychology, Sociology

Monday, October 26, 2009

FuturePundit observes a phenomenon which might open up a possible avenue for nudge:

Clean rooms also increased willingness to volunteer and donate to charity. Labels: Psychology

Tuesday, September 08, 2009

The last part of this discussion between Felix Salmon & James Kwak is about wine (most of it is about finance), in particular, the fact that people subjectively seem to gain more utility out of expensive wines than cheap ones, even though most blind taste tests show little correlation between price and preference. Paul Bloom is apparently writing his next book on the topic of this sort of subjective hedonic experience, whereby your knowledge of context shapes and filters your perception. I used to think these sorts of subjective hedonic experiences were best not had, but now I'm not so sure. Is this just signalling? I don't think so, since this often operates on a personal scale relative to food consumed alone. Can we train ourselves not to manifest these cognitive tics? Consider an extremely tasty brownie shaped like feces vs. one that wasn't. Could you train yourself not to be affected by the feces shape because you know that qualitatively there isn't any difference? I assume most people could make themselves eat the feces-shaped brownie; my question is, could their rational and conscious understanding that it tastes the same as the one not shaped like feces reshape their perception of the experience so that the two are of equal quality? It seems the price or knowledge of the provenance of a wine is much less "hard-wired" in our brains than aversion to feces, so perhaps we could appreciate cheap consumables so long as they didn't trigger aversive reflexes.

Addendum: I'm sure there's a technical word for what I'm talking about, which I've labelled subjective hedonism, so feel free to tell me in the comments. Labels: Psychology

Monday, August 24, 2009

U.S. Antidepressant Use Doubled in A Decade. I'm not against the usage of medicine, but I'm skeptical that this increase is really attributable to chronically depressed people with serious neurochemical imbalances getting treated. Rather, a substantial number of people whose lives "suck" at a given moment convince doctors to prescribe these medications. I'm willing to be corrected by data on the usage patterns, but that's just my limited personal experience with a range of people who get on these drugs in response to general suckiness, and the smaller number of people with modestly bizarre personality shifts makes me wonder as to more "modest" side effects which are not gossip-worthy.

Labels: Psychology

Tuesday, August 04, 2009

Altered connections on the road to psychopathy:

... Earlier studies suggested that dysfunction of the amygdala and/or orbitofrontal cortex (OFC) may underpin psychopathy. Nobody, however, has ever studied the white matter connections (such as the uncinate fasciculus (UF)) linking these structures in psychopaths. Therefore, we used in vivo diffusion tensor magnetic resonance imaging (DT-MRI) tractography to analyse the microstructural integrity of the UF in psychopaths (defined by a Psychopathy Checklist Revised (PCL-R) score of 25) with convictions that included attempted murder, manslaughter, multiple rape with strangulation and false imprisonment. We report significantly reduced fractional anisotropy (FA) (P<0.003), an indirect measure of microstructural integrity, in the UF of psychopaths compared with age- and IQ-matched controls. We also found, within psychopaths, a correlation between measures of antisocial behaviour and anatomical differences in the UF. To confirm that these findings were specific to the limbic amygdala–OFC network, we also studied two 'non-limbic' control tracts connecting the posterior visual and auditory areas to the amygdala and the OFC, and found no significant between-group differences. Lastly, to determine that our findings in UF could not be totally explained by non-specific confounds, we carried out a post hoc comparison with a psychiatric control group with a past history of drug abuse and institutionalization. Our findings remained significant. Taken together, these results suggest that abnormalities in a specific amygdala–OFC limbic network underpin the neurobiological basis of psychopathy. I'm a little skeptical about psychiatry's ability to diagnose distinctive phenotypes in general, but from what I have read genuinely amoral psychopaths are a real phenomenon, and not a politicized constructed pathology. Readers with more neuroscience chops are invited to weigh in if this is another sexy neuro paper with little substance. Also see ScienceDaily. Labels: Genetics, Psychology

Wednesday, July 15, 2009

Randall points me to a paper, Brain Regions for Perceiving and Reasoning About Other People in School-Aged Children:

Neuroimaging studies with adults have identified cortical regions recruited when people think about other people's thoughts (theory of mind): temporo-parietal junction, posterior cingulate, and medial prefrontal cortex. These same regions were recruited in 13 children aged 6–11 years when they listened to sections of a story describing a character's thoughts compared to sections of the same story that described the physical context. A distinct region in the posterior superior temporal sulcus was implicated in the perception of biological motion. Change in response selectivity with age was observed in just one region. The right temporo–parietal junction was recruited equally for mental and physical facts about people in younger children, but only for mental facts in older children. The sample included 7 girls and 6 boys. No mention of sex differences. Though perhaps the methods were too coarse. In any case, aspiring neuroimagers of social intelligence just need to go to a Perl Mongers meeting to recruit cognitive outliers! Labels: Psychology

Friday, July 10, 2009

As nation gains, 'overweight' is relative:

The little number on the tag on a pair of pants that indicates size can mean a lot to a person, and retailers know it. When I was in high school one particularly dumb classmate was very excited to learn that one's weight is marginally lower at higher elevations. She gave me a blank look when I pointed out that mass remains the same. I tactfully left out the fact that there wouldn't be a change in volume or surface area either.... H/T Michelle Cottle, who lets slip that she has remained the same size and shape since high school. Labels: Psychology

Wednesday, June 17, 2009

Readers might be interested in a new paper in PLoS ONE, General Intelligence in Another Primate: Individual Differences across Cognitive Task Performance in a New World Monkey (Saguinus oedipus): Individual differences in cognitive abilities within at least one other primate species can be characterized by a general intelligence factor, supporting the hypothesis that important aspects of human cognitive function most likely evolved from ancient neural substrates. Labels: Psychology, psychometrics

Saturday, April 18, 2009

Who I Am Depends on How I Feel: The Role of Affect in the Expression of Culture:

We present a novel role of affect in the expression of culture. Four experiments tested whether individuals' affective states moderate the expression of culturally normative cognitions and behaviors. We consistently found that value expressions, self-construals, and behaviors were less consistent with cultural norms when individuals were experiencing positive rather than negative affect. Positive affect allowed individuals to explore novel thoughts and behaviors that departed from cultural constraints, whereas negative affect bound people to cultural norms. As a result, when Westerners experienced positive rather than negative affect, they valued self-expression less, showed a greater preference for objects that reflected conformity, viewed the self in more interdependent terms, and sat closer to other people. East Asians showed the reverse pattern for each of these measures, valuing and expressing individuality and independence more when experiencing positive than when experiencing negative affect. The results suggest that affect serves an important functional purpose of attuning individuals more or less closely to their cultural heritage. More in ScienceDaily: ... And elevated mood even shaped behavior, allowing volunteers to act "out of character." These findings suggest that people in an upbeat mood are more exploratory and daring in attitude — and therefore more apt to break from cultural stereotype. That is, Asians act more independently than usual, and Europeans are more cooperative. Feeling bad did the opposite: It reinforced traditional cultural stereotypes and constrained both Western and Eastern thinking about the world. I think these data are interesting in light of the sort of argument presented in works such as The Moral Consequences of Economic Growth. The standard model here is that cultural openness correlates with economic growth, while stagnation results in retrenchment. Labels: History, politics, Psychology

Tuesday, March 31, 2009

In A Farewell to Alms, Gregory Clark provides data on interest rates to show that Europeans gradually developed lower time preferences. In other words, they were more likely to delay gratification and plan for the future -- paying back loans, for example. He also interprets data on wills as showing that most people of English descent today are the genetic legacy of the middle class, the poor and the aristocracy mostly having failed to reproduce themselves. That leaves us with a society where the average person maximizes their long-term material welfare much better than their counterparts would have in the Middle Ages or before. There appears to be somewhat of a drawback, though: doing so makes you more miserable over the long term.

John Tierney recently reviewed a series of studies on how the intensity of guilt and regret change over time. Read the most recent article for free here, which contains five related studies. The journal article and Tierney's write-up are brief and straightforward, so I won't belabor the details here. Basically, in the short term, indulgence-driven guilt stings more than prudence-driven regret, and this motivates us toward virtuous behavior, such as delaying material gratification. In the long term, though, guilt has faded away and regret over missing out on life's pleasures weighs more heavily on our mind. Oddly, then, maximizing long-term material well-being minimizes long-term hedonic well-being. If the big shift to low time preferences was as recent as Clark suggests -- during the Modern and especially Industrial period -- then perhaps our brain's pleasure or reward system hasn't had enough time to rewire itself to make us feel warm and fuzzy about having saved, abstained, and done the prudent thing in the past. Rather, since all other human groups before the big change, and certainly other primate groups, had very high time preferences, the reward system is probably designed to make us feel happy as we pore over a mental photo album that's stuffed with memories of irresponsible fun and indulgence. Hey, no one ever said that changing the world and getting shit done was going to be emotionally uplifting. I'd like to see follow-up studies focus on individual differences in how strongly people are motivated by guilt vs. regret. Most personality questionnaires measure something called excitement seeking or novelty seeking, as well as impulsiveness. We might predict that impulsive and excitement-seeking people are more motivated by avoiding regret than avoiding guilt, which leads them toward indulging more in the present. You could re-do all of the five studies in the article above, but using personality traits as predictor variables. 
If different parts of the brain light up when we feel guilt vs. regret, you could see if impulsive and excitement-seeking people showed greater responses to regret-based scenarios than guilt-based scenarios. (E.g., they read a story about someone else feeling these emotions, they reflect on an episode from their own lives, they see pictures of the faces of others expressing these emotions, and so on.) On an applied level, if you suffer from "hyperopia" -- planning too much for your material future -- you can push yourself to indulge merely by reflecting on how you may in 20 years regret missing out on having fun now. If you remind yourself that "You'll regret it if you don't," then you won't find yourself sighing later on about that more exciting trip you should have taken your son on, that year of working in a more fulfilling city for less pay, or that student who made a pass at you that you should have slept with. Labels: Economics, Evolutionary Psychology, Psychology

Thursday, March 19, 2009

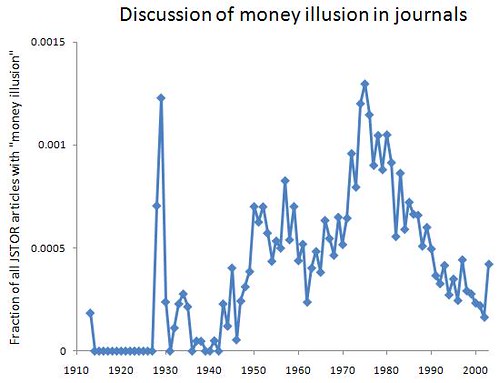

Tracking economists' consensus on money illusion, as a proxy for Keynesianism

posted by

agnostic @ 3/19/2009 02:07:00 PM

I'm probably not the only person playing catch-up on economics in order to get a better sense of what the hell is going on. Just two economists clearly called the housing bubble and predicted the financial crisis, and only one of them has several books out on the topic -- Robert Shiller, the other being Nouriel Roubini. With Nobel Prize winner George Akerlof, Shiller recently co-authored Animal Spirits, a popular audience book making the case that human psychology and behavioral biases need to be taken into account when explaining any aspect of the economy, especially when things get all fucked up. That argument would seem superfluous, but economics is the butt of "assume a can-opener" jokes for a reason.

To be fair to the field, though, they point out that before roughly the 1970s, mainstream economists all believed that the foibles of human beings, as they really exist, should be incorporated into theory, rather than dismissing them as behaviors that only an irrational dupe would show. Remember that Adam Smith was a professor of moral philosophy and wrote extensively about human psychology. From what I can tell, the recent shift does not have to do with the introduction of math nerds into the field, since the man who laid most of the formal foundation -- Paul Samuelson -- was squarely in the "psychology counts" camp. It looks more like a subset of math nerds is responsible -- call them contemptuous autists, as in "What kind of idiot would engineer the brain that way?!" (Again, these are very rough impressions, and I'm winging it in categorizing people.) Of the many ideas relevant to understanding financial crises, a key one from the old school period is money illusion, or the idea that people think in terms of nominal rather than real prices. For example, if the nominal prices of things you buy go down by 20%, you won't be any better or worse off in real terms if your nominal wages also go down by 20%. However, most people don't think this way, and would see a 20% pay-cut in this context as a slap in the face, a breach of unspoken rules of fairness. This is an illusion because a dollar (or euro, or whatever) isn't a fixed unit of stuff -- what it measures changes with inflation or deflation. It's the same reason that women's clothing designers use fuzzy units of measurement -- "sizes" -- rather than units that we agree to fix forever, such as inches or centimeters. By artificially deflating the spectrum of sizes, a woman who used to wear a size 10 now wears a size 6, and she feels much better about herself, even if she has stayed the same objective size or perhaps even gotten fatter. How could they be so stupid to fall for this, when everyone knows it's a trick? 
Who knows, but they do. Similarly, everyone knows that inflation of prices exists, and yet the average person still falls victim to money illusion, and economic theory will just have to work that in, just as evolutionary theory must work in the presence of vestigial organs, sub-optimally designed parts, and other things that make engineers' toes curl. Animal Spirits provides an overview of the empirical research on this topic, and it looks like there's convincing evidence that people really do think this way. To take just one line of evidence, wages appear to be very resistant to moving downward, even when all sorts of other prices are declining, and interviews and surveys of employers reveal that they are afraid that wage cuts will demoralize or otherwise antagonize their employees. This is obviously a huge obstacle during an economic crisis, since firms will find it tough to hemorrhage less wealth by lowering wages -- even only by lowering them enough to match the now lower cost-of-living. The way Akerlof and Shiller present the history of the idea, it was mainstream before Milton Friedman and like-minded economists tore it down starting around 1967 and culminating by the end of the 1970s, although they hint that the idea may be seeing a rebirth. As an outsider, my first question is -- "is that true?" I searched JSTOR for "money illusion" and plotted over time the fraction of all articles in JSTOR that contain this term:  Although the term was coined earlier, the first appearance in JSTOR is a 1913 article by Irving Fisher, and the surge around 1928 - 1929 is due to commentary on his book titled Money Illusion. Academics were still talking about it somewhat through 1934, probably because the worst phase of the Great Depression spurred them to try to figure out what went wrong. The idea becomes more discussed during World War II, and especially afterwards when Keynesian thought swept throughout the academic and policy worlds within the developed countries. 
In the mid-'50s, the term decelerates and then declines in usage, although the policies of its believers are still in full swing. I interpret this as showing that from the end of WWII to the mid-'50s, their ideas were debated more and more, and after this point they considered the matter settled. Starting in the mid-late-1960s, though, the term begins to surge in usage to even greater heights than before, peaking in 1975, and plummeting afterward. This of course parallels the questioning of many of the ideas taken for granted during the Golden Age of American Capitalism, and the transition to Friedman-inspired thinking in academia and Thatcher-inspired thinking in public policy. Party affiliation clearly does not matter, since the mid-'40s to mid-'60s phase showed bipartisan support for Keynesian thinking, and after the mid-'70s there was also a bipartisan consensus on theory and policy applications. I interpret this second rise and fall as a re-ignited debate that was then considered a resolved matter -- only this time with the opposite conclusion as before, i.e. that "everyone knows" now that money illusion is irrational and therefore doesn't exist. The data end in 2003, since there's typically a five-year lag between the publication date of an article and its appearance in JSTOR. So, unfortunately I can't use this method to confirm or disconfirm Akerlof and Shiller's hints that the idea might be on its way to becoming mainstream in the near future. 
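For anyone who wants to repeat this kind of term-tracking, the measure is just the share of each year's articles that contain the phrase. Dividing by the yearly total matters because the overall number of articles changes from year to year, so raw hit counts alone could mislead. Here is a minimal Python sketch; the function name `term_share_by_year` is hypothetical and the counts are made-up placeholders, not JSTOR figures.

```python
def term_share_by_year(hits, totals):
    """Return {year: fraction of that year's articles that contain the term}.

    hits   -- {year: number of articles containing the term}
    totals -- {year: total number of articles that year}
    Years with no recorded articles are skipped to avoid dividing by zero.
    """
    return {
        year: hits.get(year, 0) / total
        for year, total in sorted(totals.items())
        if total > 0
    }


# Made-up placeholder counts for illustration only; NOT real JSTOR data.
hits = {2000: 12, 2001: 40, 2002: 9}
totals = {2000: 6000, 2001: 10000, 2002: 9000}

shares = term_share_by_year(hits, totals)
peak_year = max(shares, key=shares.get)  # year with the largest share

for year, share in shares.items():
    print(year, f"{share:.4f}")
print("peak:", peak_year)
```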
Whatever the empirical status of money illusion turns out to be -- and it does look like it's real -- the bigger question is whether or not economists will return to a serious, empirical consideration of psychology -- both the universal features (however seemingly irrational), as well as the individual differences that allow Milton Friedman to easily work through a 10-step-long chain of backwards induction, but not a typical working class person, who isn't smart enough to get into college (and these days, that's saying a lot). If all the positive press, not to mention book deals, that Shiller is getting are any sign, the forecast looks optimistic. Labels: Economics, intellectual history, politics, Psychology

Monday, December 29, 2008

Spontaneous Facial Expressions of Emotion of Congenitally and Noncongenitally Blind Individuals:

The study of the spontaneous expressions of blind individuals offers a unique opportunity to understand basic processes concerning the emergence and source of facial expressions of emotion. In this study, the authors compared the expressions of congenitally and noncongenitally blind athletes in the 2004 Paralympic Games with each other and with those produced by sighted athletes in the 2004 Olympic Games. The authors also examined how expressions change from 1 context to another. There were no differences between congenitally blind, noncongenitally blind, and sighted athletes, either on the level of individual facial actions or in facial emotion configurations. Blind athletes did produce more overall facial activity, but these were isolated to head and eye movements. The blind athletes' expressions differentiated whether they had won or lost a medal match at 3 different points in time, and there were no cultural differences in expression. These findings provide compelling evidence that the production of spontaneous facial expressions of emotion is not dependent on observational learning but simultaneously demonstrates a learned component to the social management of expressions, even among blind individuals. Also see ScienceDaily. Labels: Psychology

Thursday, December 18, 2008

'Gross' Messaging Used To Increase Handwashing, Fight Norovirus:

In fall quarter 2007, researchers posted messages in the bathrooms of two DU undergraduate residence halls. The messages said things like, "Poo on you, wash your hands" or "You just peed, wash your hands," and contained vivid graphics and photos. The messages resulted in increased handwashing among females by 26 percent and among males by 8 percent. Most human cognition is implicit, and we're really not as amenable to rational appeals as we like to think we are. Remember this research?: We examined the effect of an image of a pair of eyes on contributions to an honesty box used to collect money for drinks in a university coffee room. People paid nearly three times as much for their drinks when eyes were displayed rather than a control image. This finding provides the first evidence from a naturalistic setting of the importance of cues of being watched, and hence reputational concerns, on human cooperative behaviour. Labels: Psychology

Friday, October 10, 2008

Slate has some very interesting excerpts from The Big Necessity: The Unmentionable World of Human Waste and Why It Matters posted today. The reality that a great deal of the illness in today's world is caused by fecal contamination is well known. The proximate cause of many minor illnesses is mild food poisoning, but food poisoning itself is generally caused, ultimately, by poor hygiene. It seems straightforward to imagine that poor sanitation can be a significant drain on economic productivity. But on this weblog we've also addressed the possibility of pathogens playing a role in changing personalities and temperaments. In Farewell to Alms Greg Clark made the case that the greater mortality due to poor hygiene shifted the death schedule and so relieved Malthusian pressure. In contrast, East Asia was notable for having a rather efficient system of human waste disposal and reuse, and the concomitant lower death rate resulted in more Malthusian pressures and lower per capita wealth. One of the positive developments in the historical disciplines has been a shift away from narrative annals describing political and social happenings on the elite level, to a more thorough quantitative analysis of the state of mass culture and material condition. Both perspectives are important; in War and Peace and War Peter Turchin reports military historical research which suggests that the presence of Napoleon at a battle was the equivalent of the French having 30% more troops! This suggests that to some extent Great Men do matter, but one must remember that the emergence of parvenus such as Napoleon was conditioned upon the Malthusian economic and social stresses of late 18th century France.

But the Slate piece also puts the spotlight on the particular nature of human psychology and its relation to feces: Reuse works better when it involves camouflage. This technique is used, appropriately for a militarized country, in Israel. During a presentation at a London wastewater conference, a beautiful woman from Israel's Mekorot wastewater treatment utility, who stood out in a room full of gray suits, explained that they fed the effluent into an aquifer, withdrew it, then used it as potable water. "It is psychologically very important," she told the rapt audience, "for people to know that the water is coming from the aquifer." This is a clever way of getting around fecal aversion. Not having wastewater-and not wasting water-would be better still. I'm sure this is not surprising to most readers, especially if you have read something like Paul Bloom's Descartes' Baby. One can posit pretty straightforward adaptive reasons for why humans tend to have an aversion to feces and rot; but whatever the ultimate root of these instincts they're pretty universal. Of course, like eating spicy peppers humans seem able to get around these hardwired instincts, or leverage them in some way so as to invert their effect. For example, the application of feces upon wounds had a long history in pre-modern medicine, all the way back to the Egyptians. The detailed inferences can sometimes be surprising, but the point is that though most humans reflectively accept the atomic and molecular understanding of the world, reflexively they are Aristotelians. Intuitions can be overcome or unlearned to a great extent, but if one wishes to reform the human outlook one needs to take into account its a priori biases. The human mind is not amorphous clay which one can mold into any shape in an infinite manner of ways, rather, it is a collection of blocks and units which likely have innumerable combinatorial possibilities, but certainly a finite number subject to various constraints and conditions. 
The cultural variation in attitudes which is overlain on human universals illustrates the reality that despite innate tendencies human minds are elastic. Consider: Sanitation professionals sometimes divide the world into fecal-phobic and fecal-philiac cultures. India is the former (though only when the dung is not from cows); China is definitely and blithely the latter. Nor is the place of excrement confined to the fields. It has featured prominently in Chinese public life and literature for at least a thousand years. The recycling of "night soil" mentioned in the Slate piece was also highly developed in Tokugawa Japan. Not only did the practice increase crop yields so that a large population was feasible with pre-modern agricultural techniques, but it had a byproduct effect of fostering public hygiene and reducing the disease burden (noted above). As far as the Chinese go, the attitude toward utilization of human waste, as well as other cultural traits such as minimal food taboos, illustrates the deep strain of pragmatic rationalism which many early or proto-Enlightenment philosophers so admired. As for the South Asian tendency to extend and elaborate on human intuitions and tendencies as opposed to channeling them toward material ends, if you have nothing good to say, say nothing at all.... Labels: Evolutionary Psychology, History, Psychology

Sunday, September 14, 2008

Update: Another post where I've transcribed the highest correlations for each trait.

Jason pointed me to this Guardian piece, US personalities vary by region, say researchers. It's pretty thin on the details, but luckily the original paper can be found online in full, A Theory of the Emergence, Persistence, and Expression of Geographic Variation in Psychological Characteristics. I haven't read the whole thing, nor do I know much about personality, so I have put the maps which illustrate regional variation in traits below the fold. But I do want to note the correlations between Openness and the following metrics on the state level:

% Arts and entertainment = 0.23
% Computer and mathematical = 0.24
Patent production per capita = 0.28

* Controlling for income, race, sex, college degree, and proportion of state population living in city with one million or more residents. p < 0.05.

Labels: Personality, Psychology

Wednesday, August 27, 2008

Update: Overcoming Bias responds.

Reading excerpts of the memoirs of the Mughal warlord Babur, founder of the dynasty in India, I note that his father was an alcoholic. This is not exceptional in the lineage; the Emperor Jahangir's reign was marred by problems due to his alcoholism. Nevertheless, these individuals were faithful Muslims by all their other actions. In fact, I have noted before that the early Arab Caliphs, who were responsible for the spread and dominance of Islam across what we now term the Islamic world, were by and large appreciators of wine. I was struck by Babur's mention of his father's weakness for alcohol because I recently read about the Glorious Revolution. As you know James II lost his throne because of his sincere Roman Catholicism. He rejected apostasy as the price of regaining his position. If his private correspondences did not attest to his sincerity, his public actions surely did. Nevertheless, despite James' relative religious seriousness and moral qualms for a ruler of his day (in contrast to his brother, Charles II), he retained his mistress as was customary for British kings. I point out these moral failings because I have always been struck by the starkness of human hypocrisy and its incongruity in the face of avowed beliefs. How can a sincere Muslim drink alcohol? How can a sincere Christian engage in sexual vice? One might infer from their actions that men such as James II were cynics, but as I note above James was willing to greatly reduce his chances of retaking his throne for the sake of his sincere religious commitment. I have been oversimplifying in reducing James' moral quandary to these two issues, contrasting the manifest evidence of his religious commitment in the face of inducements to convert to Protestantism with his sexual practices which contradicted Christian teaching. There are certainly other complicating factors, but I think the point stands that sin is common, and human weakness in the face of contradiction the norm. 
Men's hearts are easily divided, and simultaneously sincere in their inclinations. All this leads to the point that I believe far too many of those of us who wish to comprehend human nature scientifically lack a basic grasp of it intuitively. I have never truly believed in an awesome God of history, so my hypothetical behavior in reaction to this transcendent truth is conjecture. I know how I believe I will behave, but I have no true intuitive grasp. Over the years I have come to the conclusion that many atheists simply lack a deep understanding of what drives people to be religious, and that our psychological model of those who believe in gods is extremely suspect. The "irrationality" and "contradiction" of human behavior may be rendered far more systematically coherent simply by adding more parameters into the model. Too many "rationalists" insist on the primacy of their own spare and minimalist axioms, while normal humans may lack both the eloquence and intuition to communicate to their "rationalist" interlocutors that they are missing key structural variables. When I engage with these sorts of issues with readers of Overcoming Bias or Singularitarians my suspicions become even stronger because I see in some individuals an even greater lack of fluency in normal cognition than my own. What I am lacking in becomes all the more obvious when I see with my own eyes those who are even more damned in the eyes of God. From all this one should not conclude that I see the reality of the mystical truths of gods unveiled before my eyes. I do not. Rather, my point is that understanding human nature is not a matter of fitting humanity to our expectations and wishes, but modeling it as it is, whether one thinks that that nature is irrational or not within one's normative framework. 
Readers of this weblog are well aware and conscious of this issue; that is why I believe it is important to broach topics such as IQ because this variable matters, and most of us would wish that retardation was simply not a phenotype which was extant, but we know that that will not be so. Similarly, those of us who are psychologically atypical enough to be rather obsessed with modeling human nature into a framework which is analytically tractable need to be more conscious of the alien complexities of the normal human mind, in all its baroque paradox. Labels: Psychology

Friday, August 15, 2008

Finally: A book on standardized testing your hippie girlfriend will enjoy

posted by

Herrick @ 8/15/2008 10:50:00 PM

Daniel Koretz of Harvard's Graduate School of Education took the lecture notes from his course, "Methods of Educational Measurement," and turned them into a book: Measuring Up: What Educational Testing Really Tells Us. It's readable, filled with funny anecdotes, and contains absolutely nothing that will be new to regular GNXP readers.

But because Koretz takes the math and most of the controversy out of the debate over standardized tests, he has time to actually drill home a couple of important points repeatedly: Modern standardized tests have little bias, are pretty reliable, and while they don't tell you everything about a person or a school or a city, they are good for making rough predictions. Hence, the title of this blog post: Feel free to recommend Measuring Up as a "baby steps" book for your favorite sociologist or folk guitarist. Koretz waves his political correctness card early on, letting us know that "IQ [is] just one type of score on one type of standardized test..." and he lets us know about the "pernicious and unfounded view that differences in test scores between racial and ethnic groups are biologically determined." But you already knew he was going to say that, right? And in an unintended parody of blank-slatism, he has a chapter entitled "What influences test scores" that never once mentions genetic factors, even to dismiss them. Koretz does a great job dodging such troubling questions while focusing on what he really wants to talk about, with solid, candid chapters entitled "Validity," "Inflated Test Scores," "Error and Reliability," chapters that actually do a good job of conveying big ideas about non-experimental social science in jargon-free prose. Kudos to him for doing so. Treat it as a book on the narrow field of psychometrics and its link to policy, not as a book on the broader field of standardized tests per se and its link to policy: You'll spend a lot less time grinding your teeth. Labels: Psychology

Wednesday, July 09, 2008

Have multiple intelligence theories really been disproven?

posted by

birch barlow @ 7/09/2008 04:46:00 PM

[this is a slightly edited version of what was originally a haloscan comment]